Parallel Corpora as Infrastructure for Natively Localized AI

Pangeanic approaches parallel corpus creation and processing as an infrastructural discipline for multilingual AI. From multilingual data sourcing to precise alignment, linguistic validation, terminology control, and data governance, each layer is designed to reduce noise and preserve semantic correspondence across languages.

The result is not simply bilingual text. It is structured multilingual intelligence ready to support machine translation, retrieval across languages, language model adaptation, evaluation workflows, and production AI systems under real deployment conditions.

What are parallel corpora and why do they matter for multilingual AI?

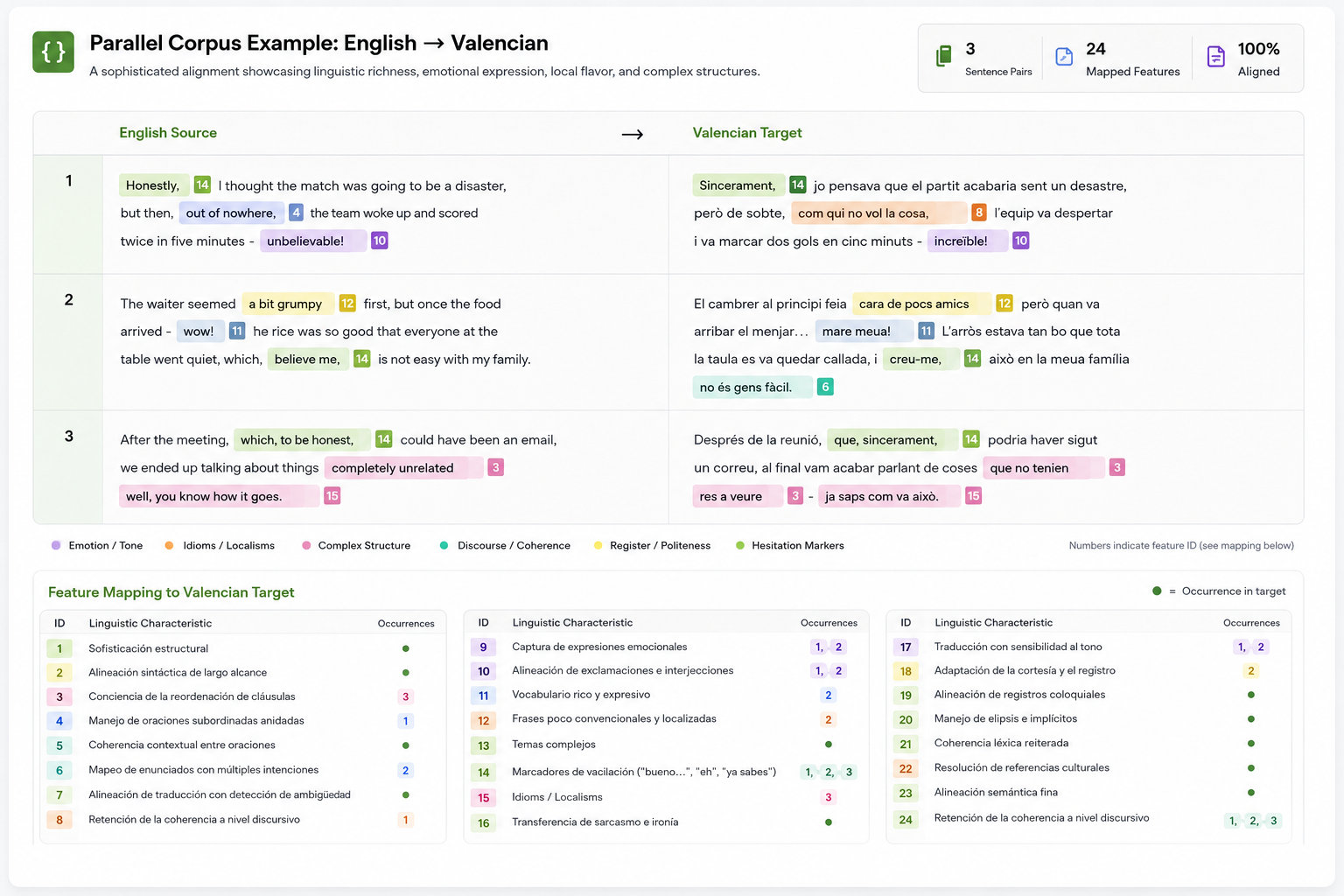

Parallel corpora are structured collections of translated text aligned at the sentence or phrase level across two or more languages. They are essential for machine translation, multilingual AI, language model adaptation and evaluation because they show how meaning, terminology and structure correspond across languages.
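As a concrete illustration, an aligned corpus can be represented as a list of source and target pairs tagged with language codes. The sketch below is a minimal example rather than any specific Pangeanic format: the `AlignedSegment` class, the `read_tsv` helper, and the two-column tab-separated layout are assumptions made for illustration.

```python
import csv
import io
from dataclasses import dataclass


@dataclass(frozen=True)
class AlignedSegment:
    """One source/target pair plus the language codes it belongs to."""
    src_lang: str
    tgt_lang: str
    source: str
    target: str


def read_tsv(text: str, src_lang: str, tgt_lang: str) -> list[AlignedSegment]:
    """Parse two-column TSV (source<TAB>target), skipping incomplete rows."""
    reader = csv.reader(io.StringIO(text), delimiter="\t", quoting=csv.QUOTE_NONE)
    segments = []
    for row in reader:
        if len(row) != 2 or not row[0].strip() or not row[1].strip():
            continue  # a pair with a missing side is not usable as aligned data
        segments.append(
            AlignedSegment(src_lang, tgt_lang, row[0].strip(), row[1].strip())
        )
    return segments


# Hypothetical sample: the second row has no target and is dropped.
SAMPLE = "The contract is void.\tEl contrato es nulo.\nSigned in Madrid.\t\n"
corpus = read_tsv(SAMPLE, "en", "es")
```

Production formats such as TMX carry more metadata per segment, but the core unit is the same: one source string paired with one target string.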

Their relevance expanded with statistical and, later, neural machine translation, where alignment quality directly shapes model behavior. In practice, these datasets now extend beyond translation systems, supporting multilingual language models, natural language generation, retrieval across languages and enterprise AI evaluation workflows.

Public resources such as parliamentary proceedings or institutional corpora offer useful baselines, but they are often limited to specific domains and require extensive cleaning, filtering and normalization. In production environments, parallel data is less about availability and more about alignment precision, domain relevance, terminology consistency and operational readiness.

Why are parallel corpora still essential for advanced translation systems?

Parallel corpora remain essential because advanced translation systems still need trusted bilingual evidence. They provide aligned source and target text for model adaptation, terminology control, quality evaluation, domain coverage, privacy-focused deployment and continuous improvement through human review.

Pangeanic has worked with parallel corpora at industrial scale for machine translation, multilingual AI and public sector language infrastructure. In 2020, Slator reported that Pangeanic had surpassed 10 billion aligned data segments in 84 languages. European projects such as NTEU also required large-scale corpus building, data collation and data release across EU language combinations, supporting the creation of neural translation engines and reusable multilingual resources.

Training and model adaptation

Even strong pretrained systems need adaptation for legal, medical, financial, technical or media content. Parallel corpora help models learn the terminology, style and translation patterns required by a specific organization, domain or sector.

Translation quality evaluation

Translation quality cannot be measured only by fluent output. Evaluation needs reference translations, human judgment and metrics that compare the source, the machine output and the expected target language result.

Terminology consistency

Enterprises often need translations that are not only correct, but consistent. Product names, legal terms, safety instructions, claims language and brand expressions must be rendered the same way across documents, markets and channels.
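One way to check this kind of consistency is to scan aligned pairs against a bilingual glossary and flag every pair where a source term appears without its approved target rendering. The following is a minimal sketch under simple assumptions: the `find_term_violations` function and the sample glossary are hypothetical, and plain substring matching ignores inflection and word boundaries, which a real terminology checker would need to handle.

```python
def find_term_violations(pairs, glossary):
    """Return (pair_index, source_term, expected_target_term) for each miss."""
    violations = []
    for i, (source, target) in enumerate(pairs):
        src_low, tgt_low = source.lower(), target.lower()
        for src_term, tgt_term in glossary.items():
            # Flag the pair when the source term is present but the
            # approved target term is absent from the translation.
            if src_term.lower() in src_low and tgt_term.lower() not in tgt_low:
                violations.append((i, src_term, tgt_term))
    return violations


# Hypothetical English to French glossary and sample pairs.
GLOSSARY = {"circuit breaker": "disjoncteur"}
PAIRS = [
    ("Reset the circuit breaker.", "Réarmez le disjoncteur."),
    ("Check the circuit breaker.", "Vérifiez l'interrupteur."),
]
violations = find_term_violations(PAIRS, GLOSSARY)
```

Here only the second pair is flagged, because it renders the glossary term with a different word.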

Less represented languages and niche domains

Generic translation systems often struggle when language coverage is limited or when the content belongs to a narrow professional field. Curated parallel corpora provide the missing evidence needed for better coverage and safer output.

Data control and privacy

Regulated organizations cannot always send sensitive text to external translation APIs. Internal parallel corpora allow them to improve private systems while preserving control over content, access, retention and governance.

Continuous improvement loops

Human corrections, reviewer feedback and approved translations become new aligned examples. Over time, this creates a practical improvement loop that strengthens translation quality, terminology precision and model reliability.

Sources: Slator on Pangeanic crossing 10 billion aligned data segments; Pangeanic NTEU project information; OPUS open parallel corpus collection; WMT General Machine Translation shared task; and COMET research on machine translation evaluation using source text, machine output and reference translations.

What makes a parallel corpus useful as an operational training asset?

A parallel corpus becomes useful as an operational training asset when translated text is aligned, verified, cleaned, labeled, documented, and prepared for a specific model or workflow. Its value depends not only on the number of segments, but also on alignment quality, domain fit, language coverage, metadata, provenance, and safe reuse in production systems.

Pangeanic structures parallel corpora as part of a wider multilingual data operation. This includes corpus sourcing, translation memory cleaning, bilingual alignment, linguistic review, terminology control, metadata preparation, data governance, and integration into machine translation, multilingual AI, and evaluation workflows. This operational approach has supported large corpus initiatives, including Pangeanic’s 10 billion alignment milestone and European projects such as NTEU.

Alignment quality

Reliable parallel corpora depend on precise alignment between the source text and the target text. Sentence level and phrase level matching must preserve meaning, terminology, structure, and context, especially when the data will be used to train or evaluate translation systems.

Linguistic verification

Human review remains important because aligned data can still contain omissions, mistranslations, formatting noise, duplicated content, or inconsistent terminology. Linguistic verification helps turn raw bilingual material into dependable training evidence.

Scale and availability

Effective multilingual AI requires volume, but useful parallel data remains scarce in many languages and domains. Corpus operations therefore need sourcing, cleaning, deduplication, and controlled expansion across the language pairs and sectors that matter to the model.
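Deduplication is one of the simpler steps to illustrate. The sketch below removes pairs that become identical after light normalization (Unicode NFKC, case folding, whitespace collapsing). The `normalize` and `deduplicate` names and the choice of normalization steps are assumptions for illustration; production pipelines often add fuzzy or hash-based near-duplicate detection on top.

```python
import unicodedata


def normalize(text):
    """Apply NFKC, case-fold, and collapse whitespace for comparison."""
    text = unicodedata.normalize("NFKC", text).casefold()
    return " ".join(text.split())


def deduplicate(pairs):
    """Keep the first occurrence of each normalized (source, target) pair."""
    seen = set()
    unique = []
    for source, target in pairs:
        key = (normalize(source), normalize(target))
        if key in seen:
            continue
        seen.add(key)
        unique.append((source, target))  # keep the original, unnormalized text
    return unique


# Hypothetical sample: the second pair differs only in case and spacing.
PAIRS = [
    ("Press the button.", "Pulse el botón."),
    ("press  the button.", "Pulse el botón."),
    ("Press the button.", "Presione el botón."),
]
unique_pairs = deduplicate(PAIRS)
```

The third pair survives because its target differs, which preserves legitimate alternative translations of the same source.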

Domain relevance

A corpus for legal contracts, medical content, software strings, public administration, or customer support cannot be treated as interchangeable text. Domain relevance determines whether a model learns useful translation behavior or generic language patterns.

Linguistic diversity

Translation data should reflect language variation across regions, registers, document types, and use cases. This helps models handle multilingual environments where the same language can vary by market, audience, dialect, and professional context.

Operational governance

Corpora used in production systems require clear rules for provenance, access, filtering, retention, review, and reuse. Governance ensures that multilingual data can support training, evaluation, and deployment without exposing sensitive content or uncontrolled data flows.

Sources: Slator on Pangeanic crossing 10 billion aligned data segments; Pangeanic NTEU project information; OPUS open parallel corpus collection; and OPUS research on aligned multilingual datasets.

Parallel Corpora delivered at industrial scale

Trusted by global technology leaders for mission-critical multilingual data operations at scale

Microsoft

Pangeanic created over 50 million aligned segments across more than 20 languages, with a strong focus on low-resource linguistic environments. The project combined scale, linguistic precision and accelerated execution timelines to support multilingual AI model training at production level.

Confidential

For a top-10 NASDAQ company, Pangeanic developed more than 45 million segments across 27 languages and multiple operational domains, tailored for multilingual AI and translation model development. The contribution strengthened linguistic consistency for the millions of users of this critical translation system.

Amazon

Pangeanic generated more than 10 million aligned segments across two languages, demonstrating high linguistic depth and operational rigor within narrowly defined language pairs. The project reflected the company’s capacity to sustain quality and consistency at scale across all steps of parallel corpora creation.

FAQ

Parallel corpora for LLMs, machine translation, and multilingual AI

These questions explain how parallel corpora are collected, evaluated, filtered, and used in modern language systems. The same principles apply to machine translation, multilingual language models, retrieval workflows, evaluation pipelines, and governed enterprise AI systems.

What metrics are used to evaluate the quality of a parallel corpus?

Parallel corpus quality is evaluated through alignment accuracy, language identification, noise detection, duplicate removal, domain consistency, terminology fidelity, lexical coverage, and downstream translation performance. For machine translation, reference-based metrics such as BLEU and neural metrics such as COMET can be used alongside human review to assess whether the corpus improves translation quality in real tasks.
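To make the reference-based idea concrete, the sketch below computes clipped n-gram precision, the building block of BLEU. It is deliberately not full BLEU: it omits the brevity penalty and the geometric mean over n-gram orders, and it uses whitespace tokenization. The function names and sample sentences are hypothetical; for reported scores, an established implementation should be used instead.

```python
from collections import Counter


def ngrams(tokens, n):
    """Count all contiguous n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def clipped_precision(hypothesis, reference, n):
    """Share of hypothesis n-grams found in the reference, with counts
    clipped so a repeated n-gram cannot be credited more often than it
    occurs in the reference."""
    hyp = ngrams(hypothesis.split(), n)
    ref = ngrams(reference.split(), n)
    total = sum(hyp.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, ref[gram]) for gram, count in hyp.items())
    return matched / total


reference = "the cat is on the mat"
hypothesis = "the the the cat mat"
p1 = clipped_precision(hypothesis, reference, 1)  # 4 of 5 unigrams credited
p2 = clipped_precision(hypothesis, reference, 2)  # 1 of 4 bigrams credited
```

Clipping is what stops the repeated "the" from inflating the score: the hypothesis contains it three times, but only two are credited because the reference contains it twice.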

How do neural methods use parallel corpora for representation learning?

Neural models use parallel corpora to learn how meaning is expressed across languages. Parallel sentences can support translation objectives, contrastive learning, multilingual embeddings, and translation language modeling. This helps models connect equivalent meanings across languages and improves transfer to multilingual search, classification, translation, and generation tasks.
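The contrastive objective can be sketched in a few lines. In the example below, each source sentence vector should be closer to its own translation than to the other targets in the batch, and the loss is the cross-entropy of picking the correct one. The two-dimensional vectors, the `contrastive_loss` name, and the temperature value are illustrative assumptions; real systems learn high-dimensional embeddings with a neural encoder.

```python
import math


def cosine(u, v):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def contrastive_loss(src_vecs, tgt_vecs, temperature=0.1):
    """Mean cross-entropy of selecting the true translation (same index)
    among all target vectors in the batch."""
    losses = []
    for i, src in enumerate(src_vecs):
        logits = [cosine(src, tgt) / temperature for tgt in tgt_vecs]
        peak = max(logits)  # subtract the max for numerical stability
        log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
        losses.append(log_sum - logits[i])
    return sum(losses) / len(losses)


# Toy embeddings: aligned targets point the same way as their sources.
SRC = [[1.0, 0.0], [0.0, 1.0]]
TGT_ALIGNED = [[0.9, 0.1], [0.1, 0.9]]
TGT_SWAPPED = [[0.1, 0.9], [0.9, 0.1]]
loss_aligned = contrastive_loss(SRC, TGT_ALIGNED)
loss_swapped = contrastive_loss(SRC, TGT_SWAPPED)
```

The loss is near zero when translations sit next to their sources and large when they are swapped. This is also why misaligned pairs in a corpus are harmful: they push the model to bring unrelated sentences together.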

What are the main challenges in collecting parallel data at scale for LLM training?

The main challenges are scarcity, noise, alignment errors, wrong language pairs, duplicated content, boilerplate, inconsistent terminology, and uneven domain coverage. Data mined from the web can provide volume, but it usually requires language detection, sentence matching, filtering, deduplication, human review, and domain balancing before it becomes reliable training data.
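Some of these filters are simple heuristics. The sketch below drops pairs that are too short, that are untranslated copies of the source, or whose character lengths differ too much to be plausible translations. The `keep_pair` name and the threshold values are assumptions chosen for illustration; suitable thresholds depend on the language pair, and identical source and target can be legitimate for names or codes.

```python
def keep_pair(source, target, max_ratio=3.0, min_chars=3):
    """Return True when a pair passes basic noise heuristics."""
    src, tgt = source.strip(), target.strip()
    if len(src) < min_chars or len(tgt) < min_chars:
        return False  # too short to carry usable evidence
    if src.casefold() == tgt.casefold():
        return False  # likely an untranslated copy
    ratio = max(len(src), len(tgt)) / min(len(src), len(tgt))
    return ratio <= max_ratio  # very uneven lengths suggest misalignment


# Hypothetical web-mined sample with three typical noise cases.
PAIRS = [
    ("Terms and conditions apply.", "Se aplican términos y condiciones."),
    ("Click here", "Click here"),
    ("OK", "De acuerdo con lo establecido en el contrato"),
    ("Read the full privacy policy before you continue.", "Leer"),
]
kept = [pair for pair in PAIRS if keep_pair(*pair)]
```

Only the first pair survives. Heuristics like these are cheap enough to run before the more expensive steps such as language identification, semantic similarity scoring, and human review.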

Why are parallel corpora useful for LLMs if LLMs are not only translation systems?

Parallel corpora help LLMs learn multilingual equivalence, terminology consistency, and meaning preservation across languages. They are useful for translation, multilingual instruction tuning, retrieval across languages, evaluation datasets, preference data, domain adaptation, and controlled generation in regulated multilingual environments.

How does Pangeanic differentiate in building parallel corpora?

Pangeanic structures parallel corpora as governed multilingual data pipelines rather than static bilingual datasets. The process combines controlled sourcing, alignment, data cleaning, human linguistic review, terminology validation, metadata preparation, governance, and delivery into machine translation, LLM, and evaluation workflows. This approach builds on Pangeanic’s industrial corpus experience, including its 10 billion alignment milestone and EU projects such as NTEU.

When should an enterprise build its own parallel corpus?

An enterprise should build or curate its own parallel corpus when generic translation systems fail to reflect its terminology, risk profile, privacy needs, document types, or regional language requirements. Private corpora are especially useful for legal, medical, financial, government, technical, customer support, and regulated content.

Sources: Slator on Pangeanic crossing 10 billion aligned data segments; Pangeanic NTEU project information; COMET research on neural machine translation evaluation; XLM research on translation language modeling; LASER research on multilingual sentence representations; and ParaCrawl information on large-scale parallel corpus mining.

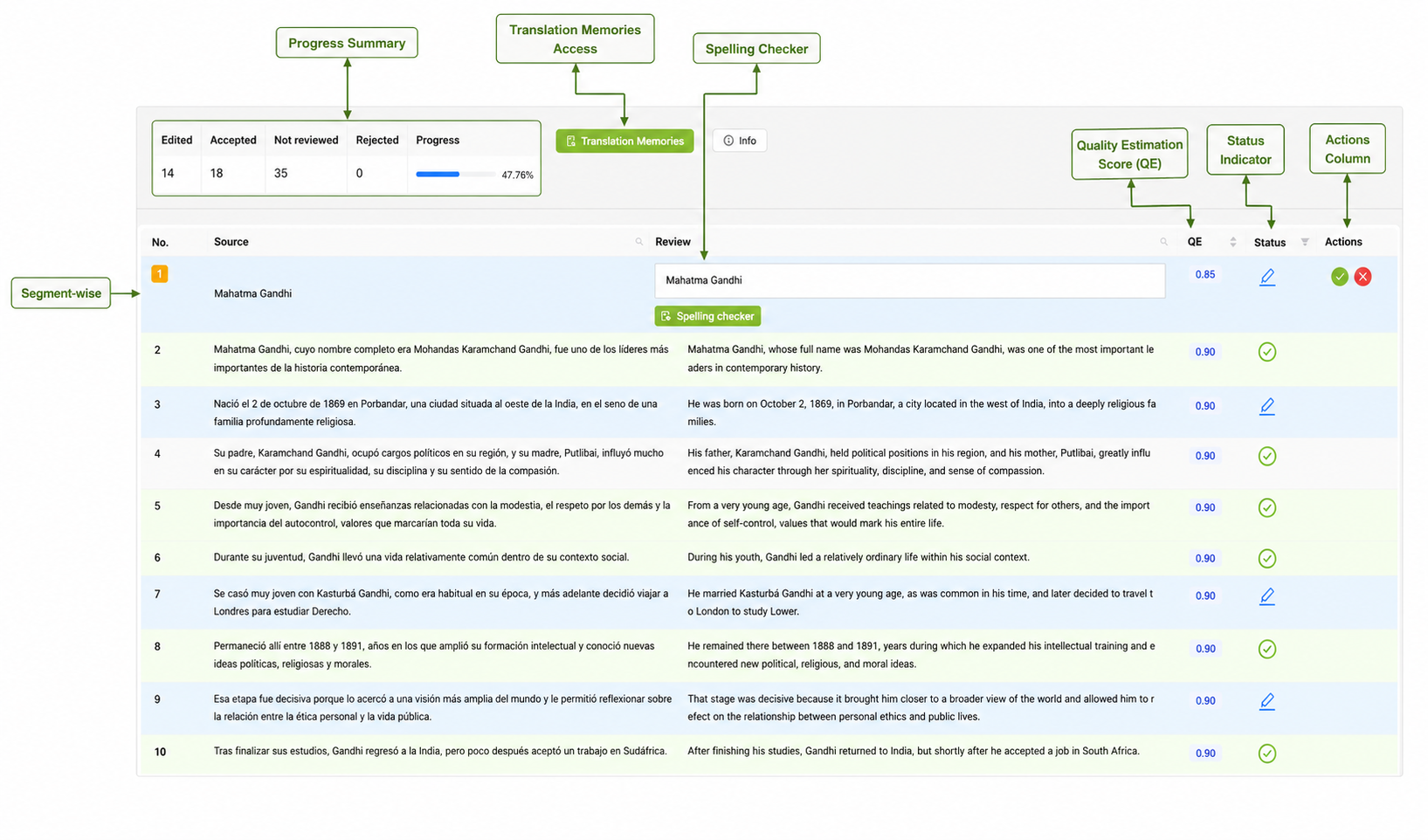

Parallel corpora dataset creation and management with PECAT

PECAT is Pangeanic’s platform for managing multilingual and multimodal data operations. For parallel corpora, it supports controlled workflows where bilingual data sourcing, segment alignment, annotation, quality review, terminology validation, and governance are managed as part of one operational process.

This matters because parallel corpus creation is not only a translation task. It is a data engineering and linguistic validation workflow where every segment must remain traceable, reviewable, and usable for machine translation, LLM adaptation, evaluation, and multilingual AI deployment.

Parallel data collection

Pangeanic uses PECAT to coordinate the acquisition, preparation, and control of bilingual and multilingual data for parallel corpus creation. The platform helps structure data intake across languages, domains, formats, and project requirements.

- Collection of multilingual content from curated, proprietary, and institutional sources

- Data sourcing for legal, medical, technical, public sector, and enterprise content

- Preparation of bilingual data for translation memory, corpus, and model workflows

- Controlled ingestion with project dashboards, supplier management, and production tracking

- Secure handling of sensitive content, including anonymization workflows when required

Alignment, annotation, and review

PECAT supports the refinement layer where source and target segments are aligned, checked, annotated, and prepared for use in machine translation and multilingual AI systems. Automated checks and expert human review work together to preserve meaning, terminology, and dataset integrity.

- Sentence and segment alignment across language pairs

- Terminology, metadata, and tag enrichment for corpus control

- Glossary and regular expression support for improved labeling accuracy

- Human review combined with automated quality checks and AutoQA controls

- Quality reports to support traceability, auditability, and continuous improvement
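As an example of the kind of automated check that regular expressions make possible, the sketch below verifies that the numbers in a source segment also appear in its target. It is a generic illustration and does not describe how PECAT or its AutoQA controls are implemented; the function names are hypothetical. Separators are stripped so that locale variants such as 1,000.50 and 1.000,50 compare equal, with the known trade-off that 1.5 and 15 also become indistinguishable, and numbers written as words are not detected.

```python
import re
from collections import Counter

# A run of digits, optionally continued by separator-plus-digits groups.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def number_signature(text):
    """Multiset of the numbers in a text, with . and , separators removed."""
    return Counter(re.sub(r"[.,]", "", m) for m in NUMBER.findall(text))


def numbers_match(source, target):
    """True when source and target contain the same numbers."""
    return number_signature(source) == number_signature(target)


ok = numbers_match(
    "Pay 1,000.50 EUR within 30 days.",
    "Pague 1.000,50 EUR en 30 días.",
)
bad = numbers_match(
    "Take 2 tablets every 8 hours.",
    "Tome 2 comprimidos cada 6 horas.",
)
```

The second pair fails because 8 became 6 in the target, the kind of error that reads fluently and is easy for a reviewer to miss in safety-critical content.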

Sources: Pangeanic PECAT platform information and Pangeanic text annotation information.

Quality governed by recognized operational standards

Pangeanic’s data and language operations are supported by certified management systems for quality, translation services, information security, medical device quality processes and human review of machine translation output. These standards help ensure that PECAT workflows remain consistent, secure, auditable and reliable in production environments.

Sources: ISO information on quality management (ISO 9001), translation services (ISO 17100), information security (ISO/IEC 27001), medical device quality management (ISO 13485) and full human post-editing of machine translation output (ISO 18587).

PARALLEL CORPORA DATA LAKE

Parallel corpora at scale for multilingual AI and machine translation

Pangeanic provides large scale parallel corpora and multilingual AI training datasets through a repository of more than 10 billion aligned segments, together with custom data operations tailored to specific model, domain and language requirements. Each project is structured around data quality, linguistic accuracy, terminology control and governed delivery for production use.

Explore related datasets

Multilingual data collection, human review, structured metadata and governed delivery pipelines designed for enterprise and public sector production environments.
