Off-the-Shelf (OTS) Training Data

The infrastructure for production-ready AI

Off-the-shelf training data is the fastest path to production-grade AI. As the industry moves beyond generic chat interfaces, enterprises are increasingly constrained not by model availability, but by access to curated, domain-specific data they can trust, deploy, and govern.

The infrastructure for production-ready AI

Off-the-shelf training data is the fastest path to production-grade AI. As the industry moves beyond generic chat interfaces, enterprises are increasingly constrained not by model availability, but by access to curated, domain-specific data they can trust, deploy, and govern.

Gartner predicts that by 2027 organizations will use task-specific AI models three times more than general-purpose LLMs. That shift reflects a structural change: performance, cost, and reliability are increasingly shaped by data quality, provenance, evaluation, and alignment rather than parameter count alone.

Models have become more interchangeable. Data has not. Pangeanic provides off-the-shelf datasets designed for immediate integration into enterprise AI workflows, supporting multilingual, regulated, and operationally demanding deployments.

You are on this page because...

Curated data is what turns models into systems

Production AI requires more than foundation models. It requires multilingual corpora, instruction datasets, speech assets, image and video annotation, privacy-aware preparation, and evaluation pipelines that hold up under enterprise conditions.

Pangeanic context: more than two decades in multilingual NLP, 10Bn+ aligned segments, annotation and validation workflows, national-scale deployments, and participation in European programs such as NTEU, MAPA, ELE, and data-space initiatives.

What is off-the-shelf training data?

Off-the-shelf training data refers to pre-collected, cleaned, and model-ready datasets that can be used directly for fine-tuning, grounding via RAG, evaluation against verified benchmarks, and instruction tuning for agentic workflows. These assets reduce the time between experimentation and deployment because they remove much of the data-collection bottleneck.

Unlike raw web-scale corpora, these datasets are prepared for operational use. That generally includes deduplication, metadata enrichment, multilingual alignment where required, usage-rights clarity, and privacy-aware filtering. For enterprise and public-sector AI, those layers are highly relevant because they improve control and reduce unnecessary uncertainty in downstream systems.

Speed

Pre-curated datasets eliminate months of manual collection, cleaning, and annotation cycles.

Reliability

Structured datasets reduce hallucination risk through grounded and domain-constrained inputs.

Control

Enterprises maintain clearer visibility over training inputs, licensing conditions, and evaluation logic.

Efficiency

Smaller models trained on high-fidelity data often perform more efficiently in specialized workflows.

High-density corpora by modality

Pangeanic maintains off-the-shelf training data across multiple modalities. The objective is not volume for its own sake, but usable, licensable, and model-ready assets that can be integrated into AI training, adaptation, and evaluation workflows with fewer operational surprises.

Multilingual text and language data

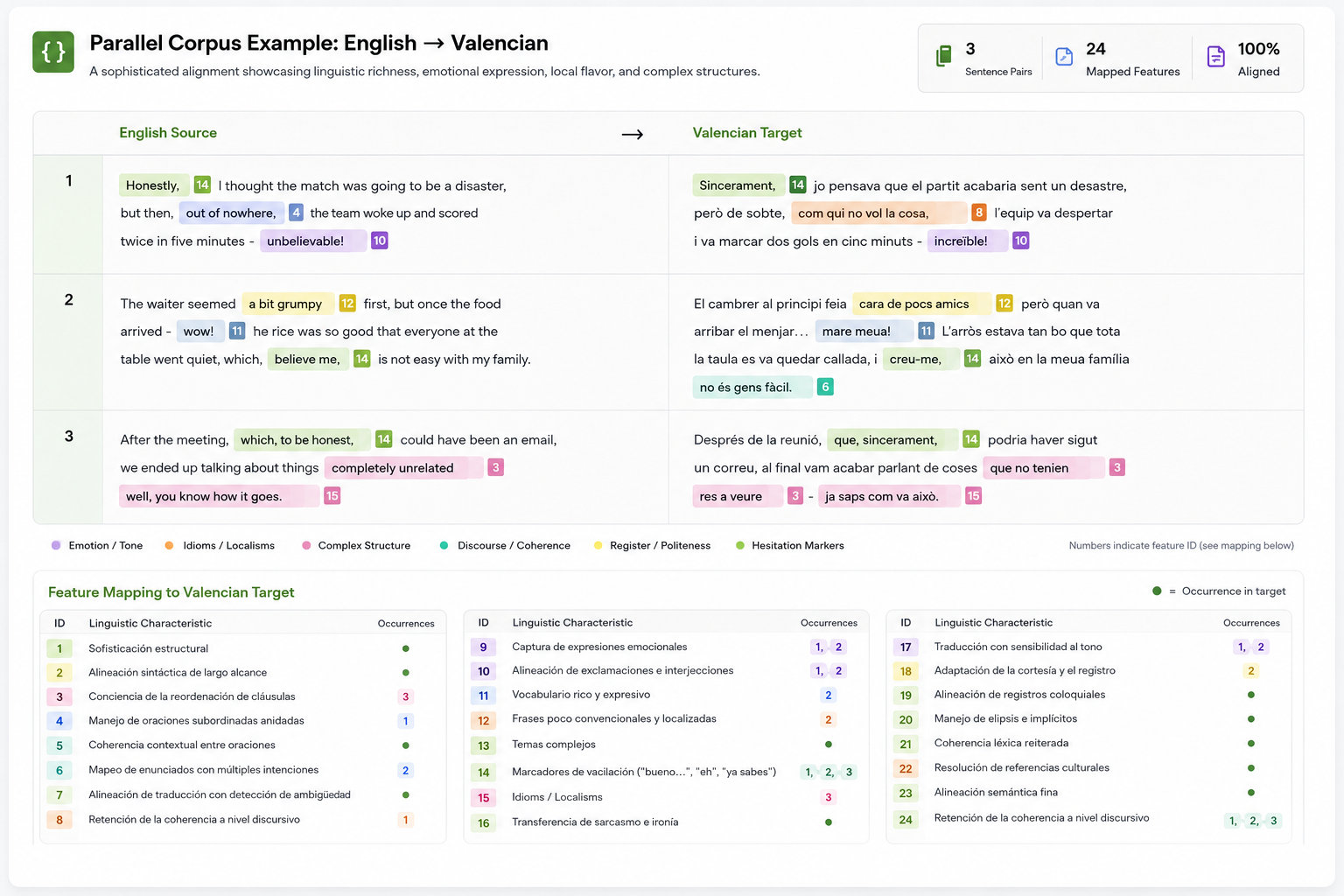

Parallel corpora, monolingual corpora, and licensed multilingual text assets prepared for machine translation, cross-lingual retrieval, domain adaptation, and LLM training.

- Parallel corpora across hundreds of language combinations

- Large-scale monolingual corpora for LLM training and adaptation

- Licensed content from publishers, news services, broadcasters, and other rights-cleared sources

- Model-ready formats such as TMX, CSV, and JSONL depending on use case

Instruction and conversational data

Question-answer datasets, prompt-response pairs, and dialogue corpora prepared for supervised fine-tuning, instruction tuning, agentic workflows, and alignment tasks.

- Question and answer datasets for instruct models

- Dialogue corpora for assistants and enterprise interaction flows

- Structured prompts for multi-step reasoning and workflow adaptation

- Human-reviewed subsets for evaluation and alignment work

Speech and audio data

Speech datasets for ASR and TTS, including annotated speech, accent and language coverage, dual-channel audio, and domain-specific voice data for operational AI systems.

- ASR and TTS datasets across languages, accents, and channels

- Dual-channel telephony and meeting-style data

- Metadata-enriched corpora for transcription and speech model adaptation

- Preparation for multilingual and sensitive-use environments

Image datasets

Structured visual datasets ranging from headshots to food, landscapes, retail imagery, and OCR-oriented assets for classification, detection, and vision-language tasks.

- Annotated images for classification and detection

- Use cases spanning headshots, food, landscapes, and document-related imagery

- Metadata and labeling for model adaptation and benchmarking

- Preparation for computer vision and multimodal training workflows

Video and multimodal data

Video datasets and multimodal corpora with temporal annotation, scene labeling, action recognition, and event tagging for advanced AI systems.

- Temporal annotation and event-level segmentation

- Scene, action, and object labeling

- Preparation for multimodal reasoning and retrieval systems

- Support for workflows that combine text, image, video, and metadata

Annotation, metadata, and licensing layers

What distinguishes model-ready data is often not raw collection volume but the operational layers around it: provenance, metadata, rights, validation, and fit for use.

- Annotation services for text, speech, image, and video data

- Metadata engineering for domain, language, source, and context

- Defined usage rights and traceable provenance where licensing applies

- Evaluation subsets for benchmarking and production validation

Explore specific dataset categories

Off-the-shelf training data spans multiple modalities. Each dataset type introduces different constraints, annotation requirements, and deployment patterns.

Speech datasets

ASR, TTS, multilingual audio, and annotated speech corpora for enterprise AI systems.

Image datasets

Annotated visual datasets for classification, detection, OCR, and multimodal AI.

Monolingual LLM data

Large-scale corpora for domain adaptation, pre-training, and enterprise language models.

Instruction-tuning datasets

Question-answer and alignment datasets for SLMs, assistants, and agent workflows.

Video and multimodal data

Temporal annotation, scene detection, and multimodal training datasets.

What makes training data model-ready

Not all datasets are usable in production systems. Model-ready data usually requires deduplication, normalization, metadata depth, annotation logic, multilingual alignment where needed, privacy filtering, and dedicated evaluation subsets. These steps are highly relevant because they influence performance, traceability, and long-term maintainability.

Deduplicate and normalize

Reduce noise, unify formats, and improve consistency before model adaptation or retrieval workflows begin.

Add metadata and annotation

Capture language, domain, source, intent, speaker, context, entity labels, and other layers required for operational use.

Benchmark and govern

Build evaluation subsets, apply privacy-aware filtering, and preserve traceability for enterprise and regulated AI systems.

Off-the-shelf versus alternative data strategies

Different AI programs require different data paths. Off-the-shelf datasets are especially useful when speed, quality control, and operational clarity need to coexist. They sit in a productive middle ground between fully bespoke collection and uncontrolled raw-data approaches.

| Approach | Speed | Cost | Control | Primary Use |

|---|---|---|---|---|

| Off-the-shelf datasets | Immediate | Low / fixed | High | Fine-tuning, RAG, evaluation, multilingual adaptation |

| Custom data collection | Slow | High | Very high | Highly specific and proprietary use cases |

| Web-scale raw data | Fast | Low | Low | Foundation pre-training and broad corpus aggregation |

| Synthetic data | Medium | Medium | Variable | Augmentation, simulation, and scenario generation |

Proven at scale in multilingual and regulated environments

Pangeanic’s data operations are rooted in two decades of high-stakes NLP. That background spans multilingual AI, machine translation, data processing, evaluation, and operational deployments where language quality and control are highly relevant. The result is not simply access to datasets, but access to data operations that have been refined under real institutional and enterprise pressure.

10Bn+ aligned segments. This body of multilingual data reflects long-term work in machine translation, cross-lingual AI, and language technology pipelines.

Used in national-scale deployments. Pangeanic has supported linguistic infrastructure and AI-related language workflows in public-sector and enterprise contexts where governance, terminology control, and operational reliability are highly relevant.

Multilingual evaluation pipelines. Dataset preparation and model adaptation require more than raw inputs. They require measurable evaluation logic, benchmark subsets, and quality workflows that remain useful after deployment.

European program participation. Pangeanic has contributed to initiatives such as NTEU, MAPA, ELE, data-space work with Universidad Politécnica de Madrid, and ecosystem activity referenced through VagrAI and DS4M. These efforts form part of Europe’s broader push toward sovereign, multilingual AI capabilities.

PECAT and annotation operations

- Annotation services across text, speech, image, and video modalities

- Privacy-aware workflows including masking, filtering, and structured review

- Evaluation-set creation for benchmarking and model validation

- Instruction tuning and alignment support for specialized AI systems

- Traceability and operational governance through PECAT workflows

PECAT: Pangeanic’s data processing platform structures annotation, validation, anonymization, and evaluation workflows so datasets remain traceable, auditable, and continuously improvable across the AI lifecycle.

When should you use off-the-shelf training data?

Off-the-shelf training data is especially effective when AI programs need to move quickly without sacrificing structure, licensing clarity, evaluation logic, or multilingual readiness.

When time-to-deployment is critical

Teams can move directly into fine-tuning, benchmarking, and retrieval preparation without building a dataset pipeline from zero.

When domain adaptation is required

Specialized corpora help smaller and more efficient models perform better in enterprise workflows where terminology and context are highly relevant.

When multilingual evaluation is needed

Structured evaluation subsets allow teams to compare model behavior, identify weak points, and improve fit across languages and domains.

When regulated environments demand control

Prepared datasets with annotation, filtering, provenance, and governance logic are more appropriate than uncontrolled corpus ingestion in many sensitive environments.

Technical FAQ for AI retrieval and enterprise buyers

What is off-the-shelf training data?

Off-the-shelf training data consists of pre-curated datasets that can be immediately used to train, fine-tune, or evaluate AI systems without requiring large-scale data collection from scratch.

What types of data does Pangeanic provide?

Pangeanic provides multilingual text datasets, question-answer and instruction-tuning data, speech and audio datasets, image datasets, and video or multimodal datasets for enterprise AI systems.

When should enterprises use off-the-shelf datasets?

These datasets are highly useful when time-to-deployment is critical, when domain adaptation is required without building data pipelines from scratch, when evaluation datasets are needed for benchmarking, or when multilingual and regulated data environments require structured preparation.

How is privacy managed in off-the-shelf datasets?

Datasets can be processed through privacy-aware workflows that include PII masking, anonymization, metadata filtering, and human validation. Pangeanic applies these workflows through its data operations and PECAT platform.

Does Pangeanic support annotation and evaluation?

Yes. Pangeanic supports annotation, multilingual evaluation, validation, benchmarking, instruction tuning, and ongoing dataset refinement as part of AI Data Operations.

Is off-the-shelf data suitable for Small Language Models?

Yes. High-fidelity off-the-shelf data is especially well suited to Small Language Models because it improves domain fit, reduces noise, and supports more efficient model adaptation.