Robust evaluation is essential to move AI systems from experimentation to reliable production. Pangeanic designs multilingual evaluation pipelines that combine human review, benchmark datasets, and domain-specific scoring criteria to assess model behavior across tasks and languages. These evaluation frameworks help AI teams detect hallucinations, measure real-world usefulness, and continuously improve model performance through structured testing and feedback loops.

We operate the human intelligence layer of AI systems.

Pangeanic helps enterprises, AI labs, and global technology teams build reliable AI with multilingual training data, human feedback, evaluation, governance, and knowledge grounding.

The market no longer needs raw datasets alone. It needs structured AI data operations: workflows, expert-review layers, alignment pipelines, and multilingual intelligence that turn models into deployable enterprise systems. From LLMs and domain-specific models to RAG systems, copilots, and agents, Pangeanic provides the data foundation and human oversight required to make AI useful, safe, and production-ready.

Why the human intelligence layer in AI alignment matters

AI success is no longer determined only by model size. It is determined by the quality of the data pipeline behind the model: what was collected, how it was structured, which human signals were captured, how outputs were evaluated, and whether deployment can withstand enterprise, legal, and multilingual realities.

Pangeanic brings together language technology expertise, large-scale multilingual data operations, enterprise-grade privacy awareness, and years of work in translation, adaptation, data preparation, and AI enablement.

- Multilingual by design: language, locale, terminology, and domain sensitivity built into the workflow.

- Enterprise-oriented: suitable for regulated, privacy-conscious, and quality-driven environments.

- Model lifecycle support: from training data to alignment, evaluation, and post-deployment optimization.

The AI data operations stack

Multilingual Training Data

Build and refine the data foundation behind LLMs, SLMs, AI agents, and domain models with multilingual corpora, speech datasets, instruction-ready data, and carefully structured metadata.

- Monolingual datasets and parallel corpora for model training and fine-tuning

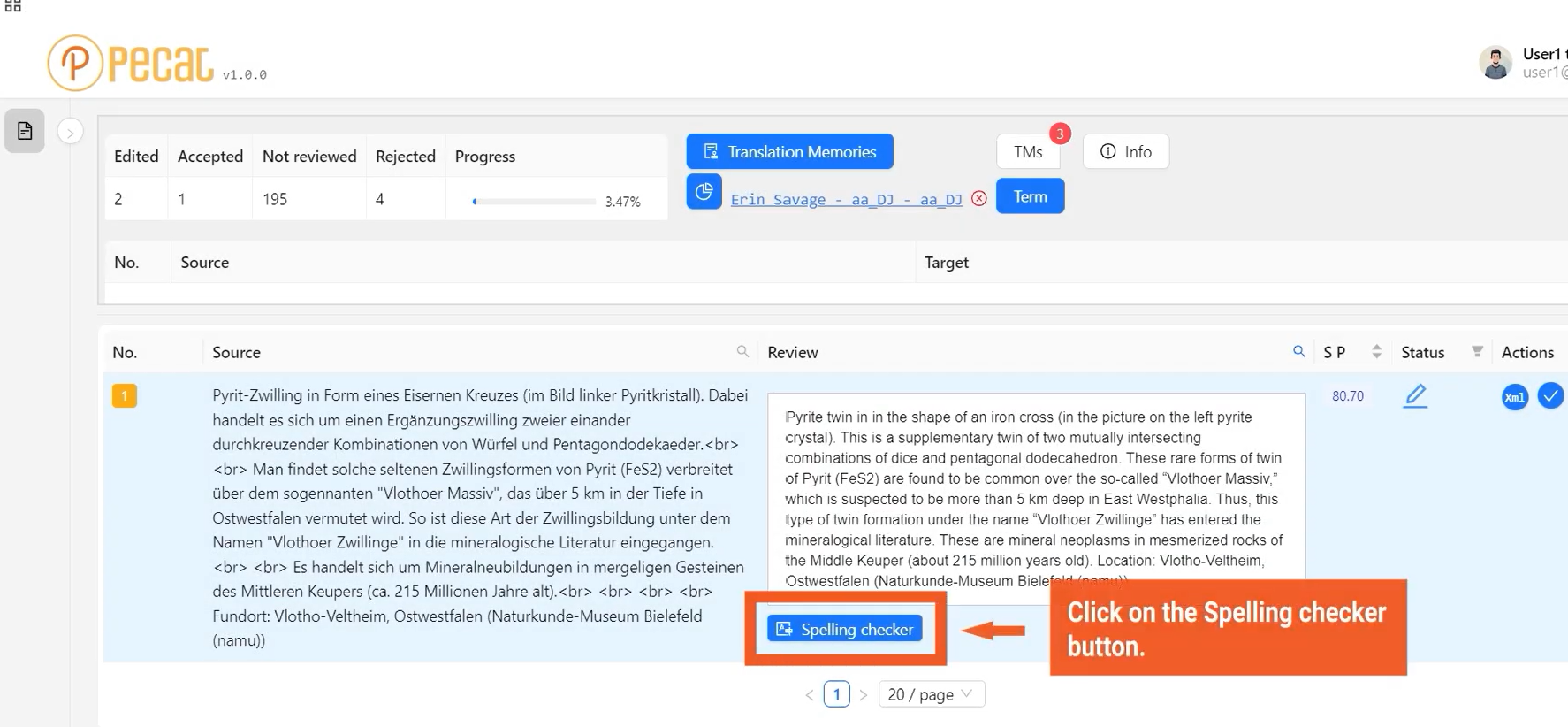

- Speech, text, and multimodal data pipelines from our PECAT platform

- Domain-specific datasets for regulated and specialist environments

- Supervised fine-tuning and instruction data preparation

- Data enrichment, normalization, and quality control workflows

High-performing models require carefully engineered training data pipelines. Pangeanic prepares multilingual datasets optimized for supervised fine-tuning (SFT), instruction tuning, and domain adaptation. Our workflows include corpus curation, deduplication, normalization, linguistic validation, and metadata enrichment across languages and domains. The result is structured training data that improves model generalization, reduces bias toward high-resource languages, and supports reliable performance in real-world enterprise environments.
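
The minimal Python sketch below illustrates the normalization and deduplication steps described above. It assumes each record is a dict with hypothetical `lang` and `text` fields, and it removes only exact duplicates after normalization. Near-duplicate detection (for example MinHash), linguistic validation, and metadata enrichment are out of scope.

```python
import hashlib
import re
import unicodedata


def normalize(text: str) -> str:
    """Apply Unicode NFKC normalization and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()


def dedupe(records: list[dict]) -> list[dict]:
    """Keep the first record for each (language, normalized text) pair."""
    seen: set[str] = set()
    kept = []
    for rec in records:
        clean = normalize(rec["text"])
        # Hash language + normalized text so identical strings in different
        # languages are not treated as duplicates of each other.
        key = hashlib.sha256(f"{rec['lang']}\x00{clean}".encode("utf-8")).hexdigest()
        if key in seen:
            continue
        seen.add(key)
        kept.append({**rec, "text": clean})
    return kept


corpus = [
    {"lang": "es", "text": "Hola  mundo"},
    {"lang": "es", "text": "Hola mundo "},
    {"lang": "en", "text": "Hello\u00a0world"},
]
print(dedupe(corpus))
```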

RLHF & AI Alignment

Align models with human preferences, enterprise policies, and user expectations through ranking, scoring, safety labeling, instruction-response generation, and multilingual reviewer workflows.

- Preference ranking and pairwise comparison tasks

- Safety, compliance, and policy-oriented annotation

- Instruction-tuning datasets for domain workflows

- Multilingual RLHF across markets and locales

- Human review layers for brand voice and enterprise consistency

Through structured human feedback pipelines, Pangeanic helps organizations transform raw model outputs into aligned AI behavior. Our RLHF workflows combine expert raters, multilingual evaluation frameworks, and domain-specific guidelines to generate high-quality preference datasets that improve model safety, usefulness, and consistency across languages, markets, and enterprise use cases.
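
Preference ranking tasks are often converted into pairwise comparisons before reward-model training. The sketch below assumes that a reviewer returns responses ordered best-first. The `prompt`/`chosen`/`rejected` field names follow a common convention for preference datasets and are used here as an assumption, not as a fixed Pangeanic format.

```python
from itertools import combinations


def ranking_to_pairs(prompt: str, ranked_responses: list[str]) -> list[dict]:
    """Expand one reviewer's best-first ranking into chosen/rejected pairs.

    A ranking of k responses yields k * (k - 1) / 2 pairwise comparisons.
    """
    pairs = []
    # combinations() preserves input order, so `better` always ranks above `worse`.
    for better, worse in combinations(ranked_responses, 2):
        pairs.append({"prompt": prompt, "chosen": better, "rejected": worse})
    return pairs


pairs = ranking_to_pairs(
    "Resume la política de devoluciones.",
    ["response_a", "response_c", "response_b"],
)
for p in pairs:
    print(p["chosen"], ">", p["rejected"])
```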

LLM and Agent Evaluation

Measure output quality, correctness, groundedness, safety, and usefulness with structured human evaluation, benchmark design, test set creation, and recurring quality review frameworks.

- Gold sets and domain-specific evaluation rubrics

- Human scoring for factuality, clarity, and task completion

- Red-teaming and edge-case evaluation support

- Regression testing for evolving models and workflows

- Multilingual evaluation across jurisdictions and content types
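
One way to turn rubric-based human scoring into comparable numbers is to compute a weighted average per reviewer and then take the mean across reviewers. The criteria, the 1-5 scale, and the weights below are hypothetical. Real rubrics are defined per domain and per project.

```python
from statistics import mean

# Hypothetical rubric: criterion names and weights are illustrative assumptions.
RUBRIC_WEIGHTS = {"factuality": 0.5, "clarity": 0.2, "task_completion": 0.3}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine one reviewer's per-criterion 1-5 scores into a single score."""
    missing = RUBRIC_WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(RUBRIC_WEIGHTS[c] * scores[c] for c in RUBRIC_WEIGHTS)


def item_score(reviews: list[dict[str, float]]) -> float:
    """Average the weighted scores of several reviewers for one test item."""
    return mean(weighted_score(r) for r in reviews)


reviews = [
    {"factuality": 5, "clarity": 4, "task_completion": 4},
    {"factuality": 3, "clarity": 4, "task_completion": 5},
]
print(round(item_score(reviews), 2))
```

Rejecting incomplete score sheets rather than silently treating a missing criterion as zero keeps partially filled reviews from skewing the aggregate.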

RAG and Knowledge Grounding

Improve retrieval-augmented generation and agent performance with curated knowledge sources, content segmentation, metadata design, document preparation, and multilingual retrieval optimization.

- Knowledge-base curation and content readiness for RAG

- Chunking logic, metadata strategies, and taxonomy preparation

- Multilingual retrieval support for global enterprise content

- Grounding datasets for factual, auditable AI output

- Evaluation layers for answer quality and source relevance

Effective RAG systems depend on well-prepared knowledge sources and structured retrieval pipelines. Pangeanic helps organizations transform enterprise content into AI-ready knowledge bases through document normalization, semantic chunking, metadata enrichment, and multilingual indexing. This preparation improves retrieval accuracy, strengthens factual grounding, and ensures AI agents generate responses based on verifiable sources rather than probabilistic guesswork.
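
Here is a simplified sketch of chunking with metadata. It assumes fixed-size word windows with overlap, used as a stand-in for semantic chunking, which would normally split on headings, sentences, or embedding similarity. The metadata fields (`doc_id`, `lang`, `position`) are illustrative.

```python
def chunk_document(doc_id: str, lang: str, text: str,
                   max_words: int = 200, overlap: int = 40) -> list[dict]:
    """Split text into overlapping word windows that carry retrieval metadata."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must satisfy 0 <= overlap < max_words")
    words = text.split()
    if not words:
        return []
    step = max_words - overlap
    chunks = []
    # Each window starts `step` words after the previous one; stop once the
    # remaining words would all fall inside the previous window's overlap.
    for position, start in enumerate(range(0, max(len(words) - overlap, 1), step)):
        chunks.append({
            "id": f"{doc_id}#{position}",
            "doc_id": doc_id,
            "lang": lang,
            "position": position,
            "text": " ".join(words[start:start + max_words]),
        })
    return chunks


doc = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_document("policy-001", "en", doc, max_words=4, overlap=1):
    print(chunk["id"], chunk["text"])
```

The overlap keeps a sentence that straddles a boundary retrievable from either neighbouring chunk, and the `lang` field lets a multilingual index filter or route queries by language.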

AI Governance and Privacy

Support enterprise and regulated deployments with privacy-conscious data preparation, lineage awareness, governance logic, data minimization, and anonymization-oriented workflows.

- PII handling, masking logic, and secure data preparation

- Governance-aware workflows for enterprise environments

- Audit-friendly data operations and human review processes

- Support for internal policies and compliance-sensitive projects

- Alignment with responsible AI deployment requirements

Enterprise AI deployments require strict control over how data is collected, processed, and used in training and evaluation pipelines. Pangeanic supports governance-aware AI data operations through PII detection and masking, secure data preparation, and traceable workflow design. These processes help organizations meet regulatory requirements, protect sensitive information, and maintain transparency and accountability in AI development.
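
The minimal masking sketch below uses regular expressions for two PII types. It replaces matches with typed placeholders and returns per-type counts for an audit trail without storing the raw values. Patterns like these are an assumption for illustration only: they miss many real-world formats (names, addresses, national IDs) and are no substitute for NER-based detection and human review.

```python
import re
from collections import Counter

# Illustrative patterns only; production PII detection needs NER models,
# locale-specific formats, and human review.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def mask_pii(text: str) -> tuple[str, Counter]:
    """Replace PII matches with typed placeholders and count them per type.

    Only counts are returned, so the audit record never contains raw PII.
    """
    counts: Counter = Counter()
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] += n
    return text, counts


masked, counts = mask_pii("Contact ana.garcia@example.com or +34 961 234 567.")
print(masked)
print(dict(counts))
```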

End-to-End AI Data Operations

For teams that need a single operating model across collection, annotation, alignment, evaluation, governance, and multilingual execution, Pangeanic acts as a full-stack delivery partner.

- Centralized workflows from pilot to production

- Human-in-the-loop operational design

- Cross-functional support for data, quality, and deployment teams

- Scalable execution models for global programs

- A single, consistent engagement model for enterprise buyers and procurement teams

Building reliable AI systems requires coordinated workflows across the entire data lifecycle. Pangeanic integrates data sourcing, annotation, alignment, evaluation, and governance into scalable human-in-the-loop pipelines designed for enterprise environments. This operational model enables AI teams to move from experimentation to production with consistent data quality, traceability, and multilingual coverage across global deployments.

AI Data Operations Architecture

Enterprise AI does not become reliable solely through model training. It becomes reliable when the full data and feedback lifecycle is engineered correctly.

The architecture below shows the layers that support high-performing AI systems:

- Data collection and source preparation provide the raw material for training, retrieval, and evaluation.

- Training data and SFT pipelines shape baseline model behavior and domain relevance.

- RLHF and human evaluation align outputs with user expectations, safety requirements, and enterprise policies.

- Benchmarking and quality assurance measure whether models actually perform well in production conditions.

- Governance, privacy, and multilingual review ensure that AI systems can scale responsibly across markets, jurisdictions, and use cases.

Pangeanic brings these layers together into one operational framework, helping organizations move beyond raw datasets toward AI systems that are aligned, measurable, and enterprise-ready.

AI fails when the operational layer is weak

Models can be impressive in demos and still fail in production. The usual causes are not mysterious: incomplete training coverage, poor metadata, missing alignment signals, weak evaluation methodology, unmanaged knowledge sources, and no reliable human feedback loop after deployment.

Collect

Gather the right multilingual, domain-specific, and policy-relevant data instead of relying on generic sources.

Structure

Define metadata, labels, formats, and instructions so the data becomes usable for model training, retrieval, and review.

Align

Capture human judgments, preferences, risk signals, and enterprise expectations through RLHF and related workflows.

Evaluate

Test outputs with gold standards, multilingual reviewers, factuality checks, and recurring benchmark processes.

Govern

Control privacy, handling rules, auditability, and deployment discipline, so AI becomes trustworthy at enterprise scale.

How organizations can use Pangeanic’s AI data operations

The use cases below apply across AI labs, enterprise innovation teams, platform groups, and multilingual content environments.

Enterprise copilots

Prepare instruction data, define policy rules, and evaluate responses for internal assistants used in customer support, operations, procurement, legal intake, or employee knowledge access.

RAG systems for global content

Curate multilingual content repositories, structure metadata, improve retrieval quality, and evaluate grounded responses across languages, business units, and markets.

Domain-adapted LLMs

Build training and fine-tuning pipelines for specialist AI systems in finance, healthcare, public sector, legal, life sciences, industry, and other quality-sensitive environments.

Human review at scale

Introduce multilingual reviewers, quality protocols, and structured scoring frameworks to monitor AI output performance beyond automated benchmarks alone.

Responsible AI deployment

Integrate privacy-aware data preparation, documentation, traceability, and policy-driven review into the operational lifecycle of enterprise AI systems.

Global model expansion

Extend AI systems into new markets and languages with locale-sensitive data, terminology-aware workflows, and multilingual alignment rather than simple translation alone.

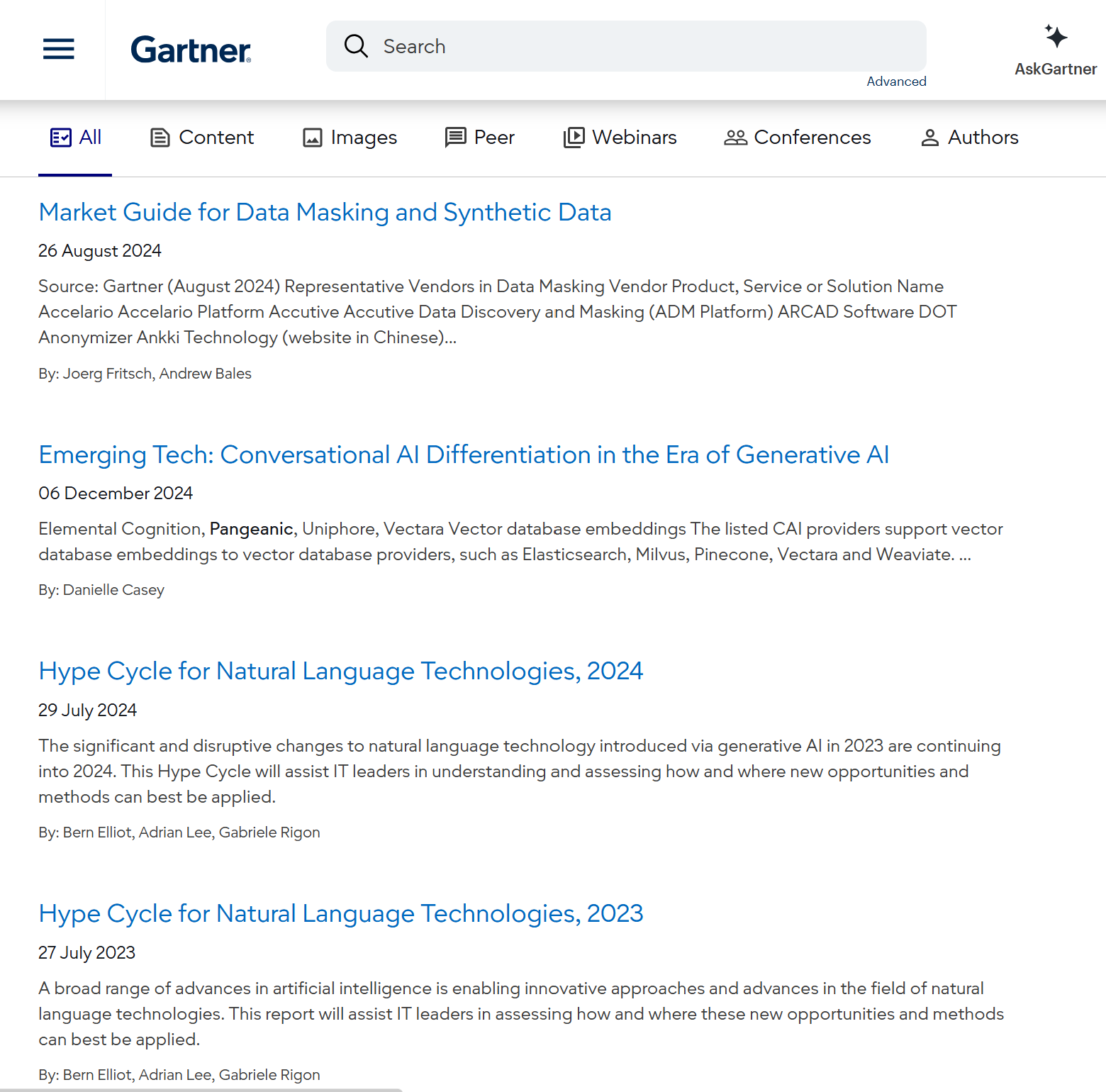

Featured in the Gartner® Hype Cycle™ for Natural Language Technologies (2023, 2024), and recognized as a vendor in Conversational AI (December 2024) and in Synthetic Data & Data Masking (July 2024)

Gartner’s analysis of risks and opportunities in language technology adoption highlights Pangeanic’s leadership in the field:

- Sample Vendor Recognition: Pangeanic is recognized as a Sample Vendor for Neural Machine Translation (NMT) in the 2023 and 2024 Hype Cycle reports.

- Advanced Customization: The report highlights our specialized capability to adapt and fine-tune linguistic models to the unique, high-precision needs of our clients, from Farsi machine translation for OSINT to Arabic-to-Russian machine translation and specialized models trained on slang and drug-cartel jargon.

- Strategic Foundation for SLMs: Our government- and industry-validated expertise in Neural Machine Translation customization serves as the technical cornerstone for our broader development of specialized Small Language Models (SLMs).

- Representative Vendor: Pangeanic is listed as a Representative Vendor in Gartner's Emerging Tech: Conversational AI.

Why Pangeanic

Pangeanic is not approaching AI data operations as a trend-driven add-on. The company’s long-standing expertise in multilingual content processing, language technology, secure enterprise workflows, and domain adaptation provides a rare foundation for this category.

That foundation matters because AI systems are becoming increasingly language-sensitive, jurisdiction-sensitive, and quality-sensitive. Many providers can label content. Far fewer can operate confidently at the intersection of language, privacy, terminology, and enterprise reality.

Frequently Asked Questions About AI Data Operations

What are AI data operations?

AI data operations are the structured processes that prepare, align, evaluate, and govern the data used to train and operate AI systems. These workflows include multilingual training datasets, human feedback pipelines such as RLHF, evaluation frameworks, RAG knowledge grounding, and governance controls. Without these operational layers, even powerful AI models struggle to perform reliably in production environments.

Why are AI data operations critical for enterprise AI?

Large language models alone do not guarantee reliable results. Enterprises require high-quality training data, domain adaptation, human evaluation, and governance frameworks to ensure models behave consistently and safely. AI data operations create the feedback loops and quality controls that transform experimental models into dependable production systems.

How are AI data operations different from simple data labeling?

Data labeling is only one component of the AI lifecycle. AI data operations include dataset curation, annotation, instruction tuning, RLHF preference data, model evaluation, governance controls, and multilingual scaling. The objective is not simply labeled data but a continuous human-in-the-loop infrastructure supporting AI development and deployment.

What role does RLHF play in enterprise AI systems?

Reinforcement Learning from Human Feedback (RLHF) allows models to learn which responses humans prefer. Through structured preference ranking, safety labeling, and evaluation signals, organizations can align AI outputs with internal policies, domain expertise, and user expectations.

How are LLMs and AI agents evaluated in production systems?

Evaluation combines human scoring, benchmark datasets, and task-specific test sets. These frameworks measure factual accuracy, groundedness, clarity, task completion, and safety across languages and domains. Continuous evaluation allows AI teams to monitor model performance as systems evolve.

What is knowledge grounding in RAG systems?

Retrieval-augmented generation systems draw on curated knowledge sources rather than relying solely on model memory. Knowledge grounding involves preparing documents, structuring semantic chunks, defining metadata, and optimizing retrieval pipelines so AI systems generate answers based on verifiable enterprise knowledge.

How does Pangeanic support multilingual AI systems?

Many AI pipelines remain optimized for English. Pangeanic provides multilingual expertise in training data preparation, RLHF pipelines, evaluation frameworks, and knowledge grounding. This enables AI systems to perform reliably across languages, regions, and cultural contexts.

How do you address privacy and compliance in AI data pipelines?

Enterprise AI systems must comply with strict privacy and regulatory requirements. Pangeanic integrates anonymization workflows, secure data preparation, governance-aware processes, and data minimization strategies so training and evaluation datasets protect sensitive information while remaining usable for AI development.

What types of organizations benefit from AI data operations?

Technology companies developing LLMs, enterprises deploying internal copilots, research institutions building domain models, and organizations implementing RAG or agentic systems all require structured AI data operations. These workflows ensure AI remains accurate, safe, and aligned with organizational knowledge.

How quickly can AI data operations pipelines be implemented?

Initial evaluation frameworks and data preparation pipelines can typically be implemented within a few weeks, depending on project scope and available data. Over time, these evolve into continuous feedback loops supporting model retraining, evaluation, and improvement.

Technical Questions About AI Data Operations

What datasets are required for RLHF?

RLHF pipelines typically require several dataset types: instruction–response pairs for supervised fine-tuning (SFT), preference ranking datasets in which reviewers compare multiple outputs, and safety datasets that label harmful or policy-violating content. The SFT data produces the starting model, while the preference data (often together with safety labels) trains the reward models that guide reinforcement learning and improve model alignment.
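
Reward models are commonly trained on preference pairs with the Bradley-Terry pairwise objective, loss = -log(sigmoid(r_chosen - r_rejected)). The sketch computes that loss with plain floats for illustration. In practice the rewards come from a neural reward model, and the loss is averaged over batches inside a training framework.

```python
import math


def pairwise_reward_loss(r_chosen: float, r_rejected: float) -> float:
    """Bradley-Terry loss -log(sigmoid(r_chosen - r_rejected)) for one pair.

    The loss is small when the reward model already scores the human-preferred
    response higher, and it grows as the model prefers the rejected one.
    """
    margin = r_chosen - r_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); branch to avoid overflow in exp().
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def batch_loss(pairs: list[tuple[float, float]]) -> float:
    """Mean loss over (r_chosen, r_rejected) reward pairs."""
    return sum(pairwise_reward_loss(c, r) for c, r in pairs) / len(pairs)


print(pairwise_reward_loss(2.0, 0.0))  # model agrees with the reviewer: low loss
print(pairwise_reward_loss(0.0, 2.0))  # model disagrees: high loss
```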

What is the difference between supervised fine-tuning (SFT) and RLHF?

SFT trains models using curated examples of desired outputs. RLHF adds a second layer where humans rank outputs and provide preference signals. SFT establishes baseline capability, while RLHF refines usefulness, safety, and behavioral alignment.

How are hallucinations detected in LLM evaluation?

Hallucination detection combines human evaluation with grounded benchmark datasets. Reviewers assess whether responses can be verified against trusted sources, while automated metrics measure factual consistency and citation accuracy across prompts and domains.
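
A crude automated pre-filter can flag answer sentences that have little lexical overlap with the retrieved sources, so that reviewers can prioritize them. This sketch uses word overlap as an assumption-laden proxy: it ignores paraphrase and negation, counts stopwords, and its 0.6 threshold is arbitrary. It is not a factual-consistency metric, and flagged sentences are only candidates for human review.

```python
import re


def _tokens(text: str) -> set[str]:
    """Lowercased word tokens; a deliberately simple tokenizer."""
    return set(re.findall(r"\w+", text.lower()))


def unsupported_sentences(answer: str, sources: list[str],
                          threshold: float = 0.6) -> list[str]:
    """Flag answer sentences whose words are poorly covered by every source."""
    source_tokens = [_tokens(s) for s in sources]
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        toks = _tokens(sentence)
        if not toks:
            continue
        # Best coverage of this sentence's tokens by any single source.
        best = max((len(toks & st) / len(toks) for st in source_tokens), default=0.0)
        if best < threshold:
            flagged.append(sentence)
    return flagged


sources = ["The warranty covers parts and labour for two years."]
answer = "The warranty covers parts for two years. It also includes free shipping worldwide."
print(unsupported_sentences(answer, sources))
```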

How are multilingual evaluation benchmarks designed?

Multilingual benchmarks require balanced datasets across languages, consistent scoring frameworks, and native-language reviewers. Evaluation must account for linguistic variation, cultural context, and domain terminology to prevent bias toward high-resource languages.
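
To keep high-resource languages from dominating a headline score, results can be reported per language and macro-averaged so that every language carries equal weight. The sketch contrasts micro and macro averages on hypothetical pass/fail results; the language codes and item counts are invented for illustration.

```python
from collections import defaultdict


def per_language_accuracy(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute accuracy separately for each language code."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for lang, ok in results:
        totals[lang] += 1
        correct[lang] += ok  # bool counts as 0 or 1
    return {lang: correct[lang] / totals[lang] for lang in totals}


def micro_and_macro(results: list[tuple[str, bool]]):
    """Micro: pooled accuracy over all items. Macro: mean of per-language accuracy."""
    micro = sum(ok for _, ok in results) / len(results)
    per_lang = per_language_accuracy(results)
    macro = sum(per_lang.values()) / len(per_lang)
    return micro, macro, per_lang


# Hypothetical results: 90 English items (81 correct), 10 Swahili items (4 correct).
results = [("en", i < 81) for i in range(90)] + [("sw", i < 4) for i in range(10)]
micro, macro, per_lang = micro_and_macro(results)
print(per_lang, round(micro, 2), round(macro, 2))
```

Here the micro average is 0.85 while the macro average is 0.65, which exposes the weaker language that the pooled number hides.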

What role does metadata play in AI training datasets?

Metadata provides structured context about language, domain, speaker attributes, document source, and environment. This allows AI teams to filter data efficiently, build balanced training splits, and analyze model performance under different conditions.
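
Metadata makes balanced splits straightforward. The sketch below assumes that records carry a hypothetical `lang` field and holds out the same fraction of every language group, so that low-resource languages are still represented in the test split. Very small groups may round down to zero test items, which a real pipeline would need to handle.

```python
import random
from collections import defaultdict


def stratified_split(records: list[dict], key: str = "lang",
                     test_fraction: float = 0.2, seed: int = 13):
    """Split records so each metadata group keeps roughly the same test share."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec)
    rng = random.Random(seed)  # fixed seed for a reproducible split
    train, test = [], []
    for items in groups.values():
        rng.shuffle(items)  # shuffles the group-local list, not the caller's
        n_test = round(len(items) * test_fraction)
        test.extend(items[:n_test])
        train.extend(items[n_test:])
    return train, test


records = ([{"lang": "es", "text": f"es-{i}"} for i in range(50)]
           + [{"lang": "eu", "text": f"eu-{i}"} for i in range(10)])
train, test = stratified_split(records)
print(len(train), len(test))
```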

How are datasets prepared for retrieval-augmented generation (RAG)?

Preparing RAG datasets involves document normalization, semantic chunking, metadata definition, and indexing strategies that optimize retrieval accuracy. Proper preparation ensures that AI systems generate responses grounded in verifiable sources.

How large does a training dataset need to be?

Dataset quality and relevance are often more important than size. High-quality domain-specific datasets frequently outperform massive generic corpora when training AI models for specialized enterprise tasks.

How do human reviewers improve AI models?

Human reviewers provide qualitative signals that automated metrics cannot capture, such as clarity, usefulness, tone, and compliance with policy guidelines. These signals feed alignment datasets and evaluation pipelines that help models improve over time.

Can AI data operations support AI agents and autonomous systems?

Yes. AI agents require structured evaluation pipelines, alignment datasets, and grounded knowledge sources to perform reliably. Human-in-the-loop feedback helps ensure agents execute tasks safely and accurately in real-world environments.

How do AI teams maintain quality as models evolve?

Continuous evaluation pipelines track performance across benchmarks and real-world tasks. Regression testing, updated datasets, and periodic human review ensure that model improvements do not introduce unintended errors or safety risks.
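
A regression gate can compare each benchmark score of a candidate model against the current baseline. The benchmark names, the 0-1 score scale, and the 0.01 tolerance below are hypothetical. The sketch also treats a benchmark missing from the candidate run as a regression, since a silently dropped test set is itself a risk.

```python
from typing import Optional


def find_regressions(baseline: dict[str, float], candidate: dict[str, float],
                     tolerance: float = 0.01) -> dict[str, tuple[float, Optional[float]]]:
    """Return benchmarks where the candidate falls more than `tolerance` below baseline."""
    regressions: dict[str, tuple[float, Optional[float]]] = {}
    for name, base in baseline.items():
        new = candidate.get(name)
        # A missing score (None) is reported alongside genuine score drops.
        if new is None or new < base - tolerance:
            regressions[name] = (base, new)
    return regressions


baseline = {"factuality_es": 0.82, "safety_en": 0.95, "task_completion_de": 0.77}
candidate = {"factuality_es": 0.84, "safety_en": 0.91}
print(find_regressions(baseline, candidate))
```

In a CI job, a non-empty result could block promotion of the candidate model until reviewers have inspected the affected test sets.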

Need a partner for training data, alignment, evaluation, or multilingual AI readiness?

Whether you are building an enterprise copilot, expanding a language model into new markets, improving RAG quality, or creating a governance-aware human feedback layer, Pangeanic can help design and operate the data workflows behind reliable AI.