Data annotation solutions for production-grade AI environments

PECAT Data Annotation Platform

PECAT is not a generic labeling tool. It is an operational layer for managing multilingual data annotation, human review and evaluation workflows in environments where quality, traceability and governance are not optional.

Data annotation as an operational discipline

Most annotation platforms focus on interface efficiency. PECAT addresses a different constraint: how to sustain data quality across languages, domains and human reviewers over time.

The challenge is not labeling data once, but maintaining consistency, auditability and evaluation logic as models evolve. This is where many AI systems begin to degrade.

Task-specific language models are redefining how AI data must be built

The rapid rise of smaller, task-specific language models introduces a different constraint. Gartner predicts that by 2027 organizations will deploy small, task-specific models at volumes at least three times greater than general-purpose large language models. Performance is then driven less by scale and more by the precision of training signals, the structure of feedback loops, and the consistency of evaluation data across iterations.

Instruction datasets, preference rankings, domain-specific corpora and multilingual edge cases introduce a combinatorial layer of complexity. Annotation shifts from labeling static datasets to managing continuous learning processes.

From labels to signals

Annotation becomes structured human feedback: ranking outputs, correcting reasoning paths and encoding domain preferences.

Continuous refinement

Data pipelines must support retraining cycles, evaluation datasets and alignment updates without compromising consistency.

Multilingual complexity

Smaller models expose linguistic gaps faster, requiring controlled annotation across languages, dialects and specialized domains.
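Ranking outputs is one concrete way human feedback becomes a training signal. A common approach is to expand a reviewer's best-to-worst ranking of K responses into K(K-1)/2 pairwise (chosen, rejected) comparisons, the format typically used to train reward models. The sketch below assumes a hypothetical record layout of (prompt, chosen, rejected) tuples; the function name and sample data are illustrative, not PECAT's export format.

```python
from itertools import combinations
from typing import List, Tuple

# Hypothetical record layout: (prompt, chosen_response, rejected_response).
PreferencePair = Tuple[str, str, str]


def ranking_to_pairs(prompt: str, ranked: List[str]) -> List[PreferencePair]:
    """Expand a best-to-worst ranking into pairwise preference records."""
    # combinations() preserves input order, so in every pair the first
    # response is the one the reviewer ranked higher.
    return [(prompt, better, worse) for better, worse in combinations(ranked, 2)]


# Illustrative ranking of three candidate translations, best first.
pairs = ranking_to_pairs("Translate 'hola' into English", ["hello", "hi", "hey there"])
for record in pairs:
    print(record)
```

Three ranked responses yield three pairs; a ranking of four would yield six. Because pairs from the same ranking share a prompt, they are not independent samples, which is worth keeping in mind when splitting data into training and evaluation sets.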

Engineered with discipline. Designed for the future.

PECAT is Pangeanic’s data annotation and orchestration platform, designed to manage multilingual and multimodal AI data workflows from collection to evaluation. It structures how human input becomes training signal, embedding quality control, feedback loops and traceability into every stage of the lifecycle. Built for production environments, it combines human expertise, secure data handling and governance to ensure AI systems remain reliable as they evolve.

Multilingual consistency

Annotation guidelines and outputs remain coherent across languages, including low-resource and regulated-domain contexts.

Human review at scale

Expert validation, disagreement handling and iterative feedback loops integrated into the workflow rather than added later.

Traceability by design

Every annotation decision is auditable, enabling reproducibility, compliance and model evaluation over time.

Evaluation-ready data

Datasets are structured not only for training, but for benchmarking, comparison and continuous alignment.

Workflow orchestration

Task routing, reviewer layers and quality control pipelines adapted to domain complexity and risk profile.

Secure deployment

Designed for environments where data cannot leave controlled infrastructure, including public sector and enterprise AI systems.
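The disagreement handling mentioned under "Human review at scale" can take several forms. One common pattern is majority vote with escalation: accept the majority label when a large enough share of reviewers agree, otherwise route the item to an expert reviewer. The sketch below is a minimal illustration under that assumption; the function name `resolve_item` and the default two-thirds threshold are hypothetical, not PECAT settings.

```python
from collections import Counter
from typing import List, Optional, Tuple


def resolve_item(labels: List[str], min_share: float = 2 / 3) -> Tuple[Optional[str], bool]:
    """Return (label, escalate) for one item labeled by several reviewers.

    label is None whenever the item must be escalated to an expert.
    """
    if not labels:
        # No reviewer input at all: nothing to accept, send to an expert.
        return None, True
    label, votes = Counter(labels).most_common(1)[0]
    if votes / len(labels) >= min_share:
        # Enough reviewers agree: accept the majority label.
        return label, False
    # Split decision (including ties): escalate instead of guessing.
    return None, True


print(resolve_item(["ORG", "ORG", "PER"]))  # two of three agree
print(resolve_item(["ORG", "PER", "LOC"]))  # no clear majority
```

Raising `min_share` for high-risk domains sends more items to expert review, which is one way routing can be adapted to a project's risk profile.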

Applied AI workflows with PECAT

Categorize segments or paragraphs using inter-annotator agreement, with classification levels and formats configured to the needs of your project.

Label datasets with fully configurable tags, and improve the quality and accuracy of your project by measuring inter-annotator agreement.
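Inter-annotator agreement is commonly quantified with Cohen's kappa when two annotators label the same items: it compares observed agreement with the agreement expected by chance from each annotator's label distribution. The sketch below is a minimal, dependency-free version; the function name and sample labels are illustrative, and this is not a description of how PECAT computes agreement internally.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies, summed over labels.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1.0:
        # Both annotators used one identical label everywhere; kappa is 0/0,
        # so by convention treat it as perfect agreement.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


annotator_1 = ["pos", "neg", "pos", "pos", "neu", "neg"]
annotator_2 = ["pos", "neg", "neg", "pos", "neu", "neg"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.739
```

Here the annotators agree on 5 of 6 items, but after correcting for chance agreement (13/36) kappa is 17/23, about 0.739. For more than two annotators, Fleiss' kappa or Krippendorff's alpha are the usual alternatives.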

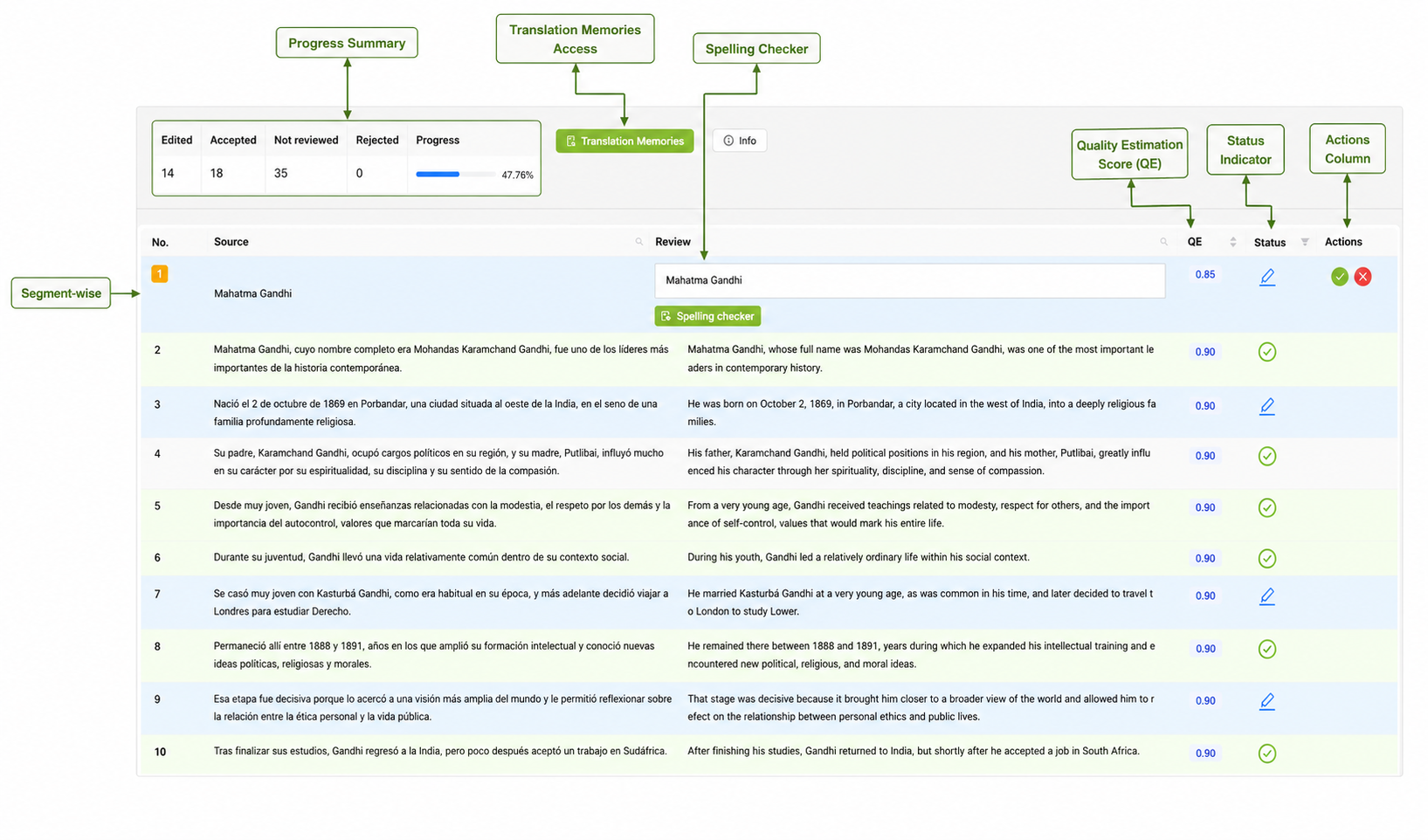

Create aligned multilingual datasets for machine translation and cross-lingual AI systems. Apply QA and post-editing procedures with in-domain neural machine translation engines.

Annotation of scripted and unscripted audio recordings, speaker diarization and acoustic event labeling. Train robust STT and NLU models with clean, diverse and scalable AI training data.

Convert speech to text for dataset creation, model training and evaluation workflows. Achieve scalable, high-accuracy transcription, which is critical to the performance of speech systems.

Prepare and structure datasets for training, fine-tuning and evaluating large language models. Get more accurate, relevant results with large curated datasets and human-in-the-loop review.

From annotation workflows to operational AI systems

PECAT has been deployed in environments where multilingual data, human feedback and evaluation pipelines required consistency, traceability and production-grade control.

Barcelona Supercomputing Center

Delivered data annotation, RLHF workflows and evaluation datasets supporting large language model training and experimentation. PECAT structured human-in-the-loop quality control, ensuring consistency and rigor across multilingual datasets. The collaboration with BSC’s Language Technologies Unit contributed to ongoing work in language models, translation and NLP research.

Amazon multilingual corpus project

Built a multilingual corpus of idiomatic expressions across languages and cultural contexts. The project was executed in PECAT through coordinated workflows between internal teams and external linguists. It ensured linguistic nuance, annotation consistency and scalability for downstream AI and language systems.

"Production-grade AI depends on more than just data and models. Pangeanic structures the workflows, validation, and feedback loops needed to keep multilingual systems accurate, measurable, and fit for regulated environments."

- Manuel Herranz, Pangeanic CEO

Data annotation in production environments

Why does annotation quality determine AI performance?

Model performance is often attributed to architecture. In production environments, the limiting factor is usually data quality and evaluation design. Annotation defines both. It determines what the model learns and how it is judged. Poor annotation creates the illusion of progress while introducing silent failure modes.

How does human review change model outcomes?

Human review is often treated as a correction layer. In practice, it defines the learning signal. Expert validation, disagreement resolution, and preference ranking introduce nuance that automated pipelines cannot capture. This is particularly relevant in regulated or domain-specific contexts, where ambiguity carries operational risk.

Why must annotation be designed for evaluation from the start?

Datasets are frequently prepared for training and only later adapted for evaluation. This creates a structural mismatch. When annotation is designed with evaluation in mind, it enables comparability, benchmarking, and continuous alignment. Without this, models may improve in isolated metrics while degrading in real-world performance.

Where PECAT sits in the AI lifecycle

PECAT sits at the heart of the human intelligence layer. This layer focuses on human-governed workflows for data annotation, alignment, evaluation and anonymization, ensuring traceability, quality control and consistent data operations across the AI lifecycle.

Datasets for AI

Training data, multilingual corpora, speech, image, video, and data preparation layers for model adaptation and evaluation.

Model Alignment & RLHF

Human feedback, preference ranking, policy-aware review, and alignment workflows that shape model behavior before release.

Evaluation and AI QA

Benchmark design, multilingual QA, regression testing, scoring, and validation frameworks for dependable AI release cycles.

Building Sovereign AI Systems

Task-specific models, fine-tuned LLMs, RAG, orchestration, and deployment design for enterprise and regulated environments.

ECO Intelligence Platform

The orchestration environment where evaluated and aligned models, multilingual workflows, retrieval systems, and enterprise AI applications become operational.

Quality defined by world-class operational standards

These standards are PECAT's proof of operational excellence, ensuring that annotation remains consistent, secure and reliable in production environments.

Annotation becomes infrastructure when it is governed

PECAT supports organizations moving from isolated datasets to controlled data operations, where annotation, evaluation and deployment remain aligned across multilingual and regulated environments.

Private cloud, on-premises and air-gapped deployment, multilingual workflows, human-in-the-loop evaluation and operational AI built for regulated environments.
