Speech Data as Infrastructure for Audio Native AI

At Pangeanic, speech dataset creation and processing are approached as an infrastructural discipline for audio native AI. From multilingual speech collection to high fidelity transcription, speaker annotation, acoustic labeling, and quality review, each layer is designed to reduce noise and improve the reliability of training pipelines.

The result is not simply audio data. It is structured acoustic intelligence ready to support speech recognition, speech synthesis, speaker diarization, conversational AI, voice agents, and multimodal language models under real deployment conditions.

Creating speech datasets for a multilingual AI reality

Speech datasets rarely arrive ready for production AI. Audio is often fragmented, acoustically inconsistent, unevenly transcribed, and missing the linguistic detail needed for reliable model training. Pangeanic addresses this by combining existing catalog data with fully custom corpora, aligned with the operational needs of speech recognition, speech synthesis, voice agents, and multilingual audio systems.

What makes speech data difficult to prepare for AI systems?

Speech data pipelines often break down before model training begins. The main constraints are not only data volume, but also acoustic variation, transcription quality, annotation discipline, consent, licensing, metadata, and governance. What appears to be abundant audio can still contain weak supervision, limited traceability, and inconsistent signals for training.

Fragmented acoustic reality

Speech data comes from varied environments where background noise, microphones, accents, speaking styles, and recording conditions differ widely. Without controlled collection and quality review, models can inherit variability that weakens performance in production use.

Annotation as a bottleneck

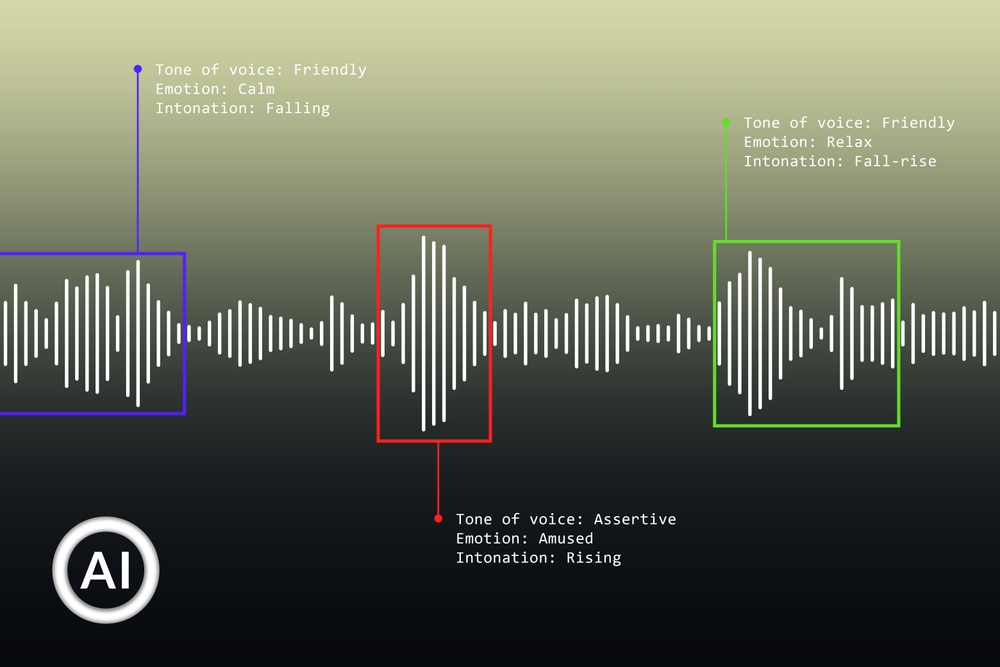

High fidelity transcription, speaker labeling, intent annotation, emotion labels, timestamps, and linguistic review require consistent guidelines and trained reviewers. Misaligned labels or superficial tagging introduce errors that compound across training and evaluation cycles.

Governance and traceability

Speech data can carry personally identifying signals, especially when voice is used for speaker identification or user recognition. Licensing, consent, provenance, retention rules, and audit trails are conditions for deploying audio models in regulated environments.

Sources: Mozilla Common Voice on multilingual speech datasets; Datasheets for Datasets on dataset documentation and transparency; and European Data Protection Board (EDPB) voice assistant guidance on voice data and biometric identification.

How does governed speech data collection work?

Pangeanic structures speech data collection as a controlled, ethical, and linguistically grounded operation. Acquisition, annotation, consent, licensing, metadata, and quality review are managed together so that speech datasets can support speech recognition, speech synthesis, voice agents, and conversational AI in production environments.

Ethical data collection

Speech datasets can be collected through PECAT, dedicated applications, and controlled contributor workflows where participants record guided prompts. Collection should include documented consent, clear licensing, contributor instructions, data provenance, and review steps before the audio is used for model training.

Spontaneous speech capture

Beyond scripted audio, spontaneous speech can be collected and transcribed to capture natural pauses, interruptions, accents, repairs, informal wording, and conversational variation. This helps train systems that must operate beyond clean laboratory conditions.

Less represented language sourcing

Speech data for less represented languages requires controlled sourcing, local linguistic knowledge, speaker diversity, and legal clarity. The goal is to improve language inclusion while maintaining audio quality, transcription accuracy, and responsible data use.

Phonetic coverage

Speech datasets should cover the sound patterns, pronunciation variants, and phonetic distribution of each language. This supports more robust speech recognition, more natural speech synthesis, and better performance across accents, speakers, and recording contexts.

Wake word and command sets

Voice activated systems need trigger phrases, commands, and intent examples recorded across speakers, demographics, accents, and acoustic conditions. This helps reduce false rejections and false activations, improve responsiveness, and support safer interaction with voice agents.

Acoustic environment diversity

Audio data should include varied microphones, distances, rooms, background noise, and recording devices. This helps models recognize speech reliably in realistic settings, from quiet offices to mobile, vehicle, call center, and public environments.
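One common way to describe the noise condition of a recording is its signal to noise ratio in decibels. The sketch below assumes a speech segment and a noise only segment are already available as lists of float samples; how those segments are chosen (for example by voice activity detection) is out of scope here. Because the speech segment also contains noise, the value is an approximation.

```python
import math

def rms(samples):
    """Root mean square amplitude of a non-empty sequence of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def snr_db(speech, noise):
    """Approximate SNR in dB from a speech segment and a noise-only segment."""
    noise_rms = rms(noise)
    if noise_rms == 0:
        return math.inf  # perfectly silent noise floor
    return 20 * math.log10(rms(speech) / noise_rms)

# Synthetic example: speech amplitude ten times the noise amplitude.
speech = [0.5, -0.5] * 100
noise = [0.05, -0.05] * 100
estimate = snr_db(speech, noise)
```

A value like this can be stored per recording so that datasets can be filtered or balanced by noise condition.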

Sources: Mozilla Common Voice on multilingual speech collection; Datasheets for Datasets on dataset documentation and transparency; and European Data Protection Board (EDPB) voice assistant guidance on voice data, consent, and responsible data processing.

What specifications define production speech data for AI?

Pangeanic supports speech data collection, transcription, annotation, metadata preparation, and governed delivery for each project’s model requirements. Speech datasets can be structured for automatic speech recognition, speech to text, text to speech, call center analytics, automotive voice assistants, multilingual voice agents, and multimodal AI systems.

| Capability | Specifications |
|---|---|
| Audio use cases | • Automatic speech recognition • Speech to text • Text to speech • Call center voice analytics • Automotive voice assistants • Multilingual voice agents |
| Audio file formats | • WAV • FLAC • MP3 • NIST SPHERE |
| Sampling rates | • 8 kHz for telephony data • 16 kHz for common speech recognition workflows • 44.1 kHz to 48 kHz for high fidelity audio and speech synthesis |
| Bit depth | • 16 bit • 24 bit • 32 bit floating point audio when required |
| Channel configuration | • Mono • Stereo • Multichannel audio for beamforming and microphone array use cases |
| Metadata fields | • Environment: studio, office, public, or natural setting • Device and channel: telephone, mobile, headset, or microphone array • Speaker attributes when legally permitted • Audio duration, bitrate, and sampling rate • Noise and signal to noise conditions • Linguistic data such as domain, intent, sentiment, and entities |
| Annotation types | • Word and phoneme time stamps • Sentiment and intent labels • Entity annotation • Speaker diarization • Speaker turns • Acoustic event labels |
| Compliance and governance | • GDPR aligned processing • CCPA aware workflows • ISO/IEC 27001 information security controls • Consent records • Licensing and provenance • Retention, access, and audit rules |
| Compression parameters | • Configurable encoding settings • Format selection by model requirement • Delivery aligned with downstream training, evaluation, or deployment constraints |
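Specifications such as sampling rate, bit depth, and channel count can be verified automatically before delivery. The following is a minimal sketch using Python's standard `wave` module, which reads uncompressed PCM WAV only; FLAC, MP3, NIST SPHERE, and floating point audio would need other tooling. The function names and the default targets (16 kHz, 16 bit, mono) are assumptions chosen for illustration.

```python
import io
import wave

def check_wav(fileobj, expected_rate=16000, expected_bits=16, expected_channels=1):
    """Return a list of mismatches; an empty list means the file conforms."""
    problems = []
    with wave.open(fileobj, "rb") as wav:
        rate = wav.getframerate()
        bits = wav.getsampwidth() * 8  # sample width is reported in bytes
        channels = wav.getnchannels()
    if rate != expected_rate:
        problems.append(f"sampling rate {rate} Hz, expected {expected_rate} Hz")
    if bits != expected_bits:
        problems.append(f"bit depth {bits}, expected {expected_bits}")
    if channels != expected_channels:
        problems.append(f"{channels} channel(s), expected {expected_channels}")
    return problems

def make_silent_wav(rate, seconds=1):
    """Build an in-memory 16 bit mono WAV of silence, for demonstration."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * rate * seconds)
    buf.seek(0)
    return buf

ok = check_wav(make_silent_wav(16000))
telephony = check_wav(make_silent_wav(8000))
```

In this sketch the 16 kHz file passes with no problems, while the 8 kHz file is reported as a sampling rate mismatch unless `expected_rate=8000` is passed for telephony data.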

Sources: Mozilla Common Voice on multilingual speech datasets; NIST SPHERE file format documentation; ISO/IEC 27001 information security management; and European Data Protection Board (EDPB) voice assistant guidance on voice data and responsible data processing.

How do speech data workflows become operational AI systems?

Pangeanic’s speech data pipelines have been deployed in environments where transcription accuracy, multilingual consistency, accessibility, and regulatory control are firm requirements. These use cases show how speech data collection, transcription, speaker processing, human review, metadata enrichment, and privacy controls can become operational infrastructure for public institutions and AI systems.

Valencian Parliament

Human reviewed transcription and subtitling workflows help preserve verbatim accuracy across Spanish and Valencian. Validation steps support institutional tone, accessibility, and searchable legislative archives.

Spanish National Parliament

AI supported transcription with expert linguistic validation helps process parliamentary discourse across Spanish, Catalan, Galician, and Basque while preserving legal integrity, traceability, and institutional precision.

Speech data processing at scale

More than 2,000 hours of multilingual audio were processed through segmentation, timestamps, transcription, speaker diarization, named entity annotation, personal data anonymization, and metadata enrichment.

Sources: Pangeanic use cases on Valencian Parliament transcription, Spanish National Parliament multilingual transcription, and comprehensive speech data processing for AI.

How PECAT turns speech data into governed AI training signals

From raw audio to structured acoustic intelligence, PECAT supports speech data annotation, transcription review, metadata preparation, quality control, and governed delivery for multilingual AI systems.

PECAT platform for speech data collection and annotation

PECAT structures speech data workflows as a controlled environment where collection, transcription, annotation, validation, metadata, and quality review remain aligned. The result is not a set of isolated audio files, but a governed data pipeline designed for speech recognition, speech synthesis, voice agents, and multilingual AI systems.

Speech data collection

PECAT supports distributed speech data acquisition through web and mobile workflows, helping teams expand language coverage, manage contributors, and capture acoustic variation across speakers, locations, devices, and recording environments.

- Contributor recruitment and management across regions, languages, and speaker profiles

- Guided recording workflows through mobile and web interfaces

- Live monitoring of task progress, audio quality, and completion status

- Controlled collection aligned with language, domain, consent, and licensing requirements

- Metadata capture for language, locale, device, channel, environment, and project context

Speech data annotation

Speech annotation in PECAT operates as a validation layer where transcription, segmentation, timestamps, speaker information, linguistic labels, and quality control are integrated into one reviewable workflow.

- Transcription and segmentation of audio into structured units

- Speaker diarization, speaker turns, and timestamp preparation

- Human review for linguistic accuracy, terminology, accents, and context

- Validation combining automated checks and expert oversight

- Traceability across annotation decisions, revisions, reviewers, and quality reports
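The automated checks mentioned above could include simple structural validation of segments before human review. The sketch below is one possible shape for such a check; the `Segment` fields and the rules are illustrative assumptions, not PECAT's actual data model. Overlap between different speakers is allowed, since overlapping speech is legitimate in conversation.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    start: float   # seconds from the beginning of the audio
    end: float     # seconds from the beginning of the audio
    speaker: str   # hypothetical speaker label, e.g. "spk1"
    text: str      # transcript for this segment

def validate_segments(segments, audio_duration):
    """Return a list of issues for a list of segments sorted by start time."""
    issues = []
    for i, seg in enumerate(segments):
        if seg.start < 0 or seg.end > audio_duration:
            issues.append(f"segment {i}: outside audio bounds")
        if seg.end <= seg.start:
            issues.append(f"segment {i}: non-positive duration")
        if not seg.text.strip():
            issues.append(f"segment {i}: empty transcript")
        if i > 0:
            prev = segments[i - 1]
            if seg.start < prev.start:
                issues.append(f"segment {i}: starts before previous segment")
            # Only same-speaker overlap is treated as an error.
            if seg.speaker == prev.speaker and seg.start < prev.end:
                issues.append(f"segment {i}: overlaps previous segment of same speaker")
    return issues

segments = [
    Segment(0.0, 2.5, "spk1", "hello"),
    Segment(2.0, 4.0, "spk2", "hi there"),
    Segment(3.5, 3.0, "spk2", ""),
]
issues = validate_segments(segments, audio_duration=5.0)
```

Issues found this way can be routed to reviewers, so that expert time is spent on linguistic judgment rather than on structural errors.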

Sources: Pangeanic PECAT platform information and Pangeanic speech annotation services information.

FAQ

Speech data ingestion for production AI systems

These questions explain how speech datasets are collected, transcribed, annotated, reviewed, and governed before they can support speech recognition, speech synthesis, voice agents, conversational AI, and multilingual audio models.

Why does speech data quality constrain model performance?

Speech data quality constrains model performance because audio models learn from acoustic signals, transcripts, labels, and metadata. Background noise, inconsistent microphones, weak transcription, missing speaker labels, and poor metadata reduce signal reliability. More data cannot compensate for poorly structured training evidence.

How does annotation shape speech model behavior?

Annotation defines the learning signal for transcription, speaker diarization, timestamps, intent labels, sentiment labels, acoustic events, and linguistic metadata. Accurate and consistent annotation helps models interpret speech patterns, separate speakers, identify meaning, and generalize across languages, accents, and recording environments.

Why is human review still required in speech data pipelines?

Human review is still required because automated pipelines cannot fully resolve accents, overlapping speech, ambiguous words, domain terminology, speaker changes, or contextual nuance. Expert validation helps correct errors, resolve disagreement, and keep outputs aligned with operational, linguistic, and regulatory requirements.
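A standard way to quantify how much an automated transcript differs from a human reviewed reference is word error rate (WER): the word level edit distance divided by the number of reference words. The sketch below is a minimal implementation under simplifying assumptions: whitespace tokenization and no text normalization (casing, punctuation), which real evaluations usually apply first.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference
    # prefix and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[len(hyp)] / len(ref)

wer = word_error_rate("the cat sat on the mat", "the cat sat on mat")
```

In this example one of six reference words is missing from the hypothesis, so the WER is one sixth. Tracking the metric per language, accent, or recording condition can show where human review is most needed.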

What metadata should speech datasets include?

Speech datasets should include metadata for language, locale, speaker attributes when legally permitted, recording environment, device type, channel, duration, sampling rate, noise conditions, consent status, licensing, domain, task, and annotation guidelines. Metadata improves traceability, filtering, evaluation, and responsible reuse.
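As a sketch of how such metadata might be represented per recording, the example below uses a Python dataclass with a completeness check and a consent gate. The field names, the set of required fields, and the `"granted"` consent value are assumptions made for illustration; they are not a Pangeanic or PECAT schema.

```python
from dataclasses import dataclass, asdict
import json

@dataclass
class RecordingMetadata:
    recording_id: str
    language: str
    locale: str
    environment: str       # e.g. studio, office, public
    device: str            # e.g. telephone, mobile, headset
    channel: str
    duration_s: float
    sampling_rate_hz: int
    consent_status: str    # hypothetical values: "granted", "withdrawn"
    license: str
    domain: str = ""

# Hypothetical minimum set of fields for traceability.
REQUIRED_FIELDS = ("recording_id", "language", "locale", "consent_status", "license")

def missing_fields(meta):
    """Names of required fields that are empty."""
    return [name for name in REQUIRED_FIELDS if not getattr(meta, name)]

def usable_for_training(meta):
    """True only when required fields are present and consent is granted."""
    return not missing_fields(meta) and meta.consent_status == "granted"

record = RecordingMetadata(
    recording_id="rec-0001", language="es", locale="es-ES",
    environment="office", device="headset", channel="mono",
    duration_s=12.4, sampling_rate_hz=16000,
    consent_status="granted", license="",
)
serialized = json.dumps(asdict(record))  # one JSON object per recording
```

With the empty `license` above, `missing_fields(record)` reports the gap and `usable_for_training(record)` returns `False`, which keeps undocumented audio out of training sets until the record is completed.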

When should an enterprise build a custom speech dataset?

An enterprise should build a custom speech dataset when public or catalog data does not match its languages, accents, acoustic conditions, industry terminology, privacy requirements, voice agent workflows, or evaluation needs. Custom speech data is especially useful for call centers, automotive systems, public services, health care, media archives, and regulated sectors.

How does Pangeanic differentiate in speech data solutions?

Pangeanic structures speech data collection, transcription, annotation, validation, metadata preparation, and governance as a single operational system. Human expertise, PECAT workflows, linguistic review, privacy controls, and quality management processes work together to support traceability, multilingual consistency, and production use.

Sources: Mozilla Common Voice on multilingual speech datasets; Datasheets for Datasets on dataset documentation and transparency; NIST SPHERE documentation; and European Data Protection Board (EDPB) voice assistant guidance on voice data and responsible data processing.

Quality governed by recognized operational standards

Pangeanic’s speech data, language, and AI data operations are supported by certified management systems for quality, translation services, information security, medical device quality processes, and human review of machine translation output. These standards help ensure that PECAT workflows for speech collection, transcription, annotation, review, and governed delivery remain consistent, secure, auditable, and reliable in production environments.

Sources: ISO information on ISO 9001 (quality management), ISO 17100 (translation services), ISO/IEC 27001 (information security), ISO 13485 (medical device quality management), and ISO 18587 (full human review of machine translation output).

SPEECH DATA AT SCALE

Speech datasets become operational when they are structured, reviewed, and governed

Pangeanic supports speech data collection, transcription, translation, annotation, metadata preparation, and governed delivery through PECAT. From controlled acquisition to validated datasets, each workflow is aligned with language coverage, acoustic conditions, model objectives, consent requirements, and production AI constraints.

Explore related datasets

Multilingual collection, transcription review, speaker annotation, structured metadata, consent records, and governed delivery pipelines designed for production AI environments.
