Noise Data as a Foundation for Robust Multimodal AI Systems

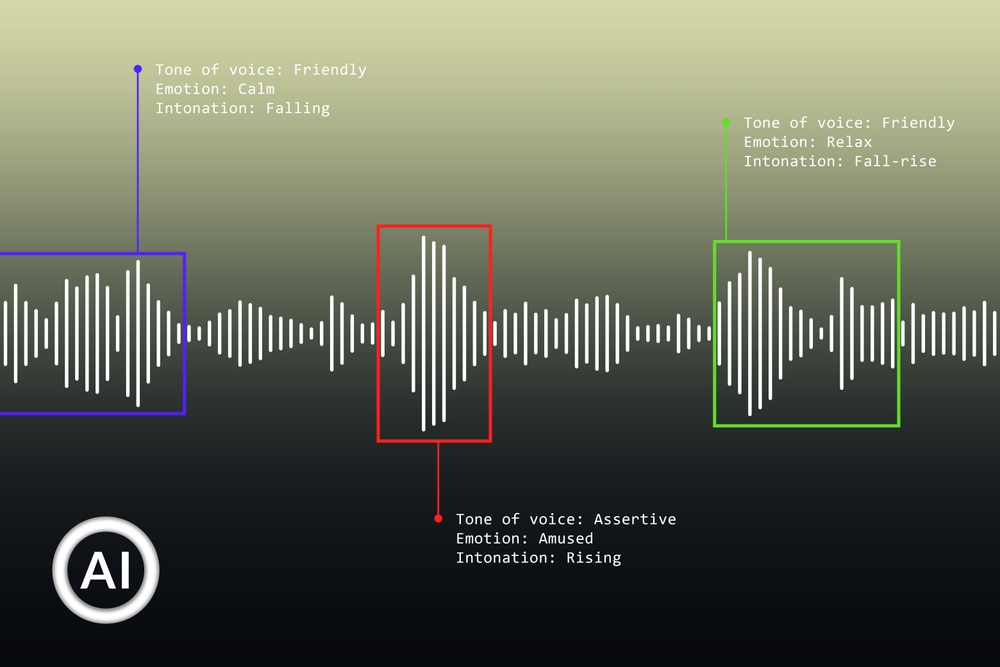

At Pangeanic, noise dataset creation and processing is approached as an infrastructural discipline. From multilingual speech collection to high-fidelity transcription and annotation, each layer is designed to reduce entropy in training pipelines.

The result is not simply data, but structured acoustic intelligence ready to support speech recognition, synthesis and conversational models under real deployment conditions.

Refining Noise Datasets for a Multimodal Reality

Noise datasets are structured collections of environmental and acoustic signals used to train AI systems to operate beyond controlled conditions. They introduce variability that models must learn to interpret, filter or ignore.

From speech recognition and voice assistants to automotive systems and surveillance analytics, noise data enables AI to function reliably in real-world environments where clarity is rarely guaranteed.

Noise is not uniform. It varies across environments, devices and contexts, making its representation and control a non-trivial task in AI training pipelines. It interacts with the signal itself, often overlapping with speech and altering meaning rather than remaining a separable layer.

Device characteristics and recording conditions further shape how noise is captured, introducing variability that models must learn to accommodate. Over time, noise evolves with changing environments, requiring datasets that reflect temporal dynamics rather than static acoustic snapshots.

Acoustic Variability

Noise differs across locations and devices, making consistency difficult and introducing instability in model performance.

Labeling Ambiguity

Defining and categorizing noise types requires context-aware annotation, often blending subjective and technical criteria.

Context Dependence

The same sound may represent noise or signal depending on use case, complicating dataset design and evaluation.
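One way to handle this in dataset design is to store the acoustic label once and derive the "noise" or "signal" role per use case. The sketch below is illustrative only: the `SoundEvent` record, the use-case names and the `TARGET_CLASSES` mapping are hypothetical and do not describe Pangeanic's or PECAT's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoundEvent:
    label: str      # acoustic class, e.g. "siren" (hypothetical taxonomy)
    start_s: float  # event onset in seconds
    end_s: float    # event offset in seconds


# Hypothetical use cases and the acoustic classes each one treats as signal.
TARGET_CLASSES = {
    "speech_recognition": {"speech"},
    "emergency_vehicle_detection": {"siren"},
    "home_security": {"glass_breaking", "alarm"},
}


def role_for(event: SoundEvent, use_case: str) -> str:
    """Return "signal" if the event's class is a target for this use case, else "noise"."""
    targets = TARGET_CLASSES[use_case]
    return "signal" if event.label in targets else "noise"


siren = SoundEvent(label="siren", start_s=1.0, end_s=3.5)
for use_case in TARGET_CLASSES:
    print(use_case, "->", role_for(siren, use_case))
```

Keeping the acoustic label independent of the task role means the same annotated recording can be reused across projects without relabeling.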

Noise Data, Structured for Real Deployment

Pangeanic approaches noise datasets as controlled inputs, where collection, annotation and acoustic variability are aligned with model objectives.

Real-World Noise Capture

Data is collected across urban, industrial and domestic environments.

Controlled Annotation

Noise classification aligned with downstream tasks including filtering, recognition and detection.

Multilingual Context

Noise integrated with speech datasets for training conversational AI systems.

Noise Datasets Structured Across Real-World Environments

From controlled indoor signals to rare and high-intensity events, Pangeanic structures noise datasets to reflect how environments behave in practice, not in isolation.

Home & Indoor Environments

- Human activity sounds: breathing, coughing, laughter, footsteps

- Appliance cycles: washing machines, kettles, vacuums

- Safety alerts: alarms, glass breaking, doorbells

- Object interaction: keys, doors, dishes, water, paper

Industrial & Work Environments

- Construction: drills, cranes, hammering, welding

- Factories: conveyor belts, robotics, forklifts, alarms

- Office environments: typing, calls, printers, movement

- Regional workplace acoustics across global settings

Mobility & Vehicles

- Cars: engines, doors, seatbelts, horns

- Public transport: metros, buses, trains

- Emergency and special vehicles: sirens, motorcycles, bicycles

- Marine and air: ferries, aircraft, drones

Global & Specific Environments

- Urban ambiance: markets, metros, traffic patterns

- Transport hubs: airports, stations, crowd movement

- Weather: rain, wind, thunder variations

- Regional fauna: birds, insects, animals

Commercial & Public Spaces

- Retail: scanners, carts, registers, vending machines

- Hospitality: cafés, kitchens, restaurant chatter

- Recreation: arcades, cinemas, sports venues

- Institutional: schools, libraries, hospitals

Extreme / Rare Scenarios

- Disasters: earthquakes, floods, wildfires

- Crowd events: protests, concerts, stadiums

- Military and security: gunfire, helicopters, drones

- High-intensity, rarely occurring acoustic conditions

Explore Pangeanic Data Offerings

Pangeanic's data capabilities span multiple modalities. Each dataset type introduces different constraints, annotation requirements, and deployment patterns.

SPEECH

Speech datasets

ASR, TTS, multilingual audio, and annotated speech corpora for enterprise AI systems.

IMAGES

Image datasets

Annotated visual datasets for classification, detection, OCR, and multimodal AI.

TEXT

Monolingual LLM data

Large-scale corpora for domain adaptation, pre-training, and enterprise language models.

From raw audio to governed training signals, this is how noise becomes operational intelligence through our proprietary PECAT Data Annotation platform.

FAQ

Understanding Noise Data Implications in Real-World AI Deployment

Why are noise datasets essential for training robust AI systems?

Noise datasets expose models to the unpredictability of real-world environments, allowing them to generalize beyond clean or ideal data conditions. Controlled exposure to noise improves robustness and helps systems perform reliably when inputs are imperfect or degraded.

What risks arise if noise is not properly represented in training data?

Unstructured or unmanaged noise can distort learning signals, reduce accuracy and lead models to misinterpret patterns. Poor handling of noise often results in overfitting or unreliable outputs when deployed in real-world scenarios.

How do noise datasets support real-world sound detection and classification?

Noise datasets enable models to identify and differentiate environmental sound events with precision, even under variable acoustic conditions. They are critical for applications such as sound event detection, surveillance and environmental monitoring.

Why is balancing noise exposure important in AI training?

While noise improves generalization, excessive or poorly structured noise can degrade performance by overwhelming the underlying signal. Effective datasets balance variability and clarity, ensuring models learn meaningful patterns rather than random distortions.
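A common way to control noise exposure is to mix noise into clean speech at a chosen signal-to-noise ratio (SNR). The following is a minimal sketch of that general technique in plain Python, not a description of Pangeanic's pipeline; the function names, the synthetic signals and the 10 dB target are assumptions chosen for illustration.

```python
import math


def rms(samples):
    """Root-mean-square level of a sequence of float samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def mix_at_snr(speech, noise, snr_db):
    """Scale `noise` so the speech-to-noise ratio equals `snr_db`, then add it to `speech`."""
    if len(speech) != len(noise):
        raise ValueError("speech and noise must have the same length")
    speech_rms, noise_rms = rms(speech), rms(noise)
    if speech_rms == 0 or noise_rms == 0:
        raise ValueError("both signals must be non-silent")
    # From SNR_dB = 20 * log10(speech_rms / noise_rms), solve for the noise level.
    target_noise_rms = speech_rms / (10 ** (snr_db / 20))
    gain = target_noise_rms / noise_rms
    return [s + gain * n for s, n in zip(speech, noise)]


# Synthetic stand-ins for real recordings: a 440 Hz tone sampled at 16 kHz
# and a deterministic pseudo-noise sequence in the range [-1, 1].
speech = [math.sin(2 * math.pi * 440 * t / 16000) for t in range(1600)]
noise = [((t * 7919) % 101) / 50.0 - 1.0 for t in range(1600)]
mixed = mix_at_snr(speech, noise, snr_db=10.0)
```

Sweeping `snr_db` over a range of values is one way to expose a model to variability while keeping the underlying signal recoverable.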

Quality defined by world-class operational standards

PECAT provides proof of operational excellence, ensuring that annotation remains consistent, secure, and reliable in production environments.

NOISE DATA AT SCALE

Noise becomes usable when it is structured, controlled and contextualized

Pangeanic supports end-to-end noise data collection, classification and annotation through PECAT. From environmental capture to structured datasets, workflows remain aligned with real-world conditions, model objectives and deployment constraints.
